Anyone who has tossed a coin knows that it is a priori very hard to predict the outcome. All the same, obtaining nine hundred consecutive tails in one thousand tosses, though extremely unlikely, is not impossible—and this can occur with no prior intimation. This simple example is representative of a great many processes in nature.

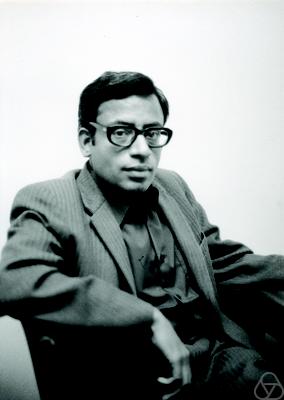

In a career spanning over fifty years, S.R.S. Varadhan, the Frank Jay Gould Professor of Science at the Courant Institute of Mathematical Sciences at New York University, has made diverse contributions in analysis, probability theory and stochastic processes. He is a recipient of many prestigious honours and his work on large deviations in probability theory was recognized with the Abel Prize in 2007.

In this conversation with Sudhir Rao and R. Mohan, Varadhan talks about large deviation theory, its connections with physics and its applications to phenomena ranging from bankruptcies to tsunamis, and also reminisces on the early influences on his career and his everyday life as a mathematician.

School and College

Your childhood was a time of some of the most historic moments of our country—the struggle for independence, freedom from colonial rule, and the excitement, anxiety and aspirations that followed. What was it like, growing up in the 1940s and ’50s in India?

SRSV: Well, in the ’40s, I was a child. So I have only memories of one or two incidents. I remember Independence Day because I was in fourth or fifth grade at that time. We went to school though it was a holiday. We were in our classrooms and we were asked to go out. We went out and they gave a laddu to each one of us. Then we took the flag and marched to the town, a suburb of Chennai where my parents lived. That’s what I remember of that day. And then I remember Gandhi’s assassination. I think his ashes were taken all round the country in a special train and we were near the train track; I remember going with some other children and some parents to watch the train go by. And then, when the Republic day came a few years later, I remember having a function at school, raising the flag and so on. Those are the memories I have of that period.

Did you have any siblings?

SRSV: No.

You are the lone child. What was the name of the school you went to?

SRSV: Well, I went through different schools. My father was a high-school teacher in the district board school system of Chengalpattu district, so he was transferred from one school to another several times. They were always board high schools [that were managed by each district]. There were three different towns in which he served during this period. One was a board high school in Satyavedu. It may be part of Andhra now—at that time it was already very bilingual [Telugu and Tamil]. The second one was a board high school in Ponneri. And the third school was a board high school in Uthiramerur.

You were a high school and college student in the 1950s. How was it during the ’50s?

SRSV: High school was mainly fun. In the sense that the demand [on us] was not much. Some homework was there, especially in math. In other subjects I don’t think we had any homework. It was five or ten minutes of work and that was it. The rest of the time after school was spent playing with other kids. We did not have any equipment but we could always improvise and pretend we were playing cricket—we could use any ball and any object for a bat. We were on a river bed—the town was close to a river—which, maybe, had water two or three times a year when it rained. The rest of the time it was just sand, so we would go and play on the sand. That’s how we spent our days. So school itself was not that demanding.

What interested you during your school days?

SRSV: During my childhood, I initially wanted to be a doctor, you know. But then I went to a medical college exhibition once.

Which year was this?

SRSV: Maybe when I was in seventh grade or something like that. My cousin and I went to the exhibition and I saw all the bodies that were cut up and so on. So I said “This is not for me.” [Laughs] And my cousin got a fever that lasted for three days after looking at this.

It was a truly mortifying experience!

SRSV: Definitely a mortifying experience. After that, my math teacher was the one who got me interested in mathematics and that’s how it developed. But I was always interested in science in some form or the other. If it were not mathematics, it would have been physics or chemistry.

Would you remember the name of this mathematics teacher in school?

SRSV: Swaminatha Iyer.

If it were not for him, how would your relationship with mathematics be?

SRSV: I was not afraid of mathematics like many students are. I didn’t have to work hard at it—it came very easily to me. I was not that much interested in other subjects like literature or social sciences and so on. My interest was mainly in mathematics and basic physical sciences. In school I did well in all of them, and when I went to college I initially took mathematics, physics and chemistry as my subjects. And all that the teacher told me was that statistics was an important subject, that it would become important, and so I should try to study statistics. When I finished my junior college—in those days you did two years of Intermediate after high school—I had a chance to go to an honours programme. I didn’t want to take mathematics honours; instead, I opted for statistics honours. The other alternative was mathematics and physics. I had admission for both physics and statistics, and I took statistics. I think if he had not told me about statistics, I would probably have gone into physics.

The Fields Medallist William Thurston has this incident to recall where his father would ask him questions while they were driving from one place to another. On one such occasion, when his father asked, “Bill, what is the sum of the first one hundred positive integers?”, Thurston is supposed to have done the math in his head and come up with his own answer. Did you have some such experience of juggling with numbers, trying to understand shapes? Anything of that sort?

SRSV: No…the only experience of that kind I had was as a child when I used to play chess. My mother taught me chess at a very young age—I think at the age of three or four. I used to play chess with my mother and somehow that developed the idea of planning ahead and seeing that it’s not just the current position you have to imagine but future positions too. So that ability was developed during that period.

So, in some sense, some logical thinking was always very dear to you and you understood early on that you had the capacity to do that?

SRSV: Yeah.

When were you at Presidency College, Chennai?

SRSV: 1956–59.

Kolmogorov’s formalism was already around at that time. Were you aware of Kolmogorov’s measure theoretic probability?

SRSV: No. I didn’t know there were singular distributions at all until R.R. Bahadur gave a course much later at ISI [Indian Statistical Institute]. [Laughs]

During your student days at Presidency College, was there anything that suggested to you that you could pursue mathematics seriously?

SRSV: Statistics was always interesting. Statistics had two parts. One was theoretical statistics, which mainly is just probability—calculating the distributions of various things, estimation, testing. These are all really mathematical in some sense. And then there are descriptive things like sampling and so on in which there is mathematics, but there is some other thinking also involved. I liked them both.

On your 60th birthday celebrations, Professor V.S. Varadarajan mentions in his talk that you had had a spectacular career in Madras University during your Master’s—that you scored the highest in the history of that university. But your initial aspiration was to study industrial statistics. Why was that?

SRSV: You know, when I finished my Master’s and took the exam for ISI, and was admitted as a research scholar, I had no idea what research meant. And in the ISI structure in those days, in Kolkata, we had no courses but only some lectures. Varadarajan gave some lectures on point-set topology and Bahadur gave some lectures on basic measure theory. Other than that we had no lectures—no lectures in probability. I had no idea about Kolmogorov consistency, Brownian motion or any of these Markov processes. None of these subjects were taught. So I had no idea what areas were open in terms of research. My idea was maybe one of the things one could do after getting a Ph.D. in statistics is to work in the industry because I had heard about operations research, quality control and things like that. I had an idea that maybe that’s what I should do. That interest lasted only three or four months, because intellectually, I was not satisfied.

ISI Days

Please tell us more about your days at ISI and about the legend of the famous four [Varadhan, R. Ranga Rao, V.S. Varadarajan and K.R. Parthasarathy].

SRSV: It was interesting. I think there was very little time when the famous four were all there [together]. I joined in September 1959 after Varadarajan had already completed his Ph.D. Some time later—I think November or December—he left to go to the United States. So it was just three of us—Ranga Rao, [K.R.] Parthasarathy, and I. We were together for a couple of years and did some work together. Ranga Rao left to go to the US, Parthasarathy left to go to Russia. Later, Varadarajan came back to ISI, and Varadarajan and I were together for a year. We tried to do something in group representations and then I left to go to the US. So it was sort of that the four of us were there at different times and not at the same time. [Laughs]

There is this legendary story of your own thesis defense with Professor Kolmogorov as your examiner…

SRSV: I think that story has been transformed many times. [Laughs] The actual truth is that Kolmogorov was at ISI for a long visit, maybe for six weeks or two months or so. I was completing my thesis at that time. C.R. Rao asked him if he would be one of the examiners of my thesis and he agreed. He wanted to take the thesis with him back to Moscow and write a report from there—and that’s what he did. But the report did not come for quite a long time. And then KRP [Parthasarathy] went to Moscow and nudged him, and then the report came.

In his report, Kolmogorov is said to have remarked that it was not the work of a student, but of a mature master…

SRSV: I am not officially aware of the report because it is confidential. Some people who have read the report say something [along those lines]. I guess that’s true. I have never seen the report myself though! [Laughs]

Did the journey to ISI Kolkata involve any career decision dilemmas?

SRSV: No. My other option was to take the IAS [Indian Administrative Service] exam. That was always the option that was open. And I was young enough that, in principle, I could take it even after getting my Ph.D. So that’s what I told my parents—that they should not think that somehow I was going down a blind alley in this research business, that I could always take the IAS exam later on.

Did it comfort you that you had this backup plan?

SRSV: I didn’t really think about it. You know, I enjoyed being in Kolkata. In my first year there, I was doing something that wasn’t interesting to me—quality control and so on. Applied statistics didn’t appeal to me. So I had a lot of free time which I spent by playing badminton, playing bridge [laughs], until KRP one day told me, “You are wasting your time. Better learn some math.” [Laughs]

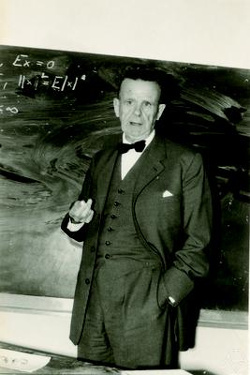

You entered ISI Kolkata at a time when both Professor Mahalanobis and Professor Rao were very active there. Your memories of life as a student at ISI Kolkata?

SRSV: There are always funny things that happen at ISI. Prof. Mahalanobis once decided that he wanted to give a course on mathematics; some part of mathematics—set theory or something like that. Of course, if the Professor wanted to give a course, we all had to attend. There was no alternative. In the class, when he was talking about sets and so on, he wanted to define an empty set. So he said that the number of tigers in that classroom was an empty set. [Laughs] I always thought that was funny.

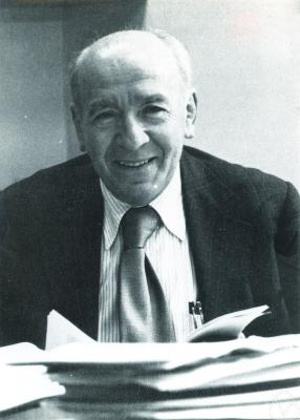

I also remember that Fisher used to visit ISI.

Ronald Fisher?

SRSV: Ronald Fisher. And he always talked about the same thing—fiducial inference and so on. The research scholars got tired of listening to the same thing again and again, so they wouldn’t go. But C.R. Rao had to make sure that Fisher had an audience. So we had some people working in various offices who were not really faculty but who were sort of calculating stuff and so on. He rounded up a bunch of them, and made them sit in Fisher’s lecture. One of them was distracted and didn’t pay attention. Fisher got angry at him: “So what do you think? That I am an idiot talking nonsense?” [Laughs]

How often did you meet C.R. Rao, your thesis advisor, and what were his inputs?

SRSV: I think I would go and tell him what I was doing, maybe once in six months or something like that. He would say, “You are doing good. Go ahead.” He was not that much into what we were doing but he was aware that what we were doing was good.

Since he was assured that you were on the right track, he was kind of hands-free?

SRSV: He had confidence in the team—about Varadarajan, Parthasarathy, Ranga Rao and myself. He had confidence in us.

What about your interactions with Prof. Mahalanobis?

SRSV: We never saw Prof. Mahalanobis. He was never there, but would show up once in a while.

But he was the Director?

SRSV: See, the Indian Statistical Institute is a huge place. At that time, the National Sample Survey (NSS) was also part of the Institute. The central thing at the institute was the RTS [Research and Training School] and that’s where all the education and research was being done. C.R. Rao was the head of that. We dealt only with him. Mahalanobis would come on special occasions but not on every day. He didn’t even have an office in our building. His office was always in Amrapali, which is another building.

At the Courant Institute

Then you moved to the Courant Institute of Mathematical Sciences in New York. How were your initial years in the Courant Institute? I read in one of your interviews that you initially wanted to come back to India but then decided to stay back.

SRSV: My initial idea was to go for three years or so and then come back to India. Then I got married after a year, and my wife had just finished her PUC [Pre-University Course] here, after which she joined NYU as an undergraduate. That was a four-year programme which meant that, beyond three years, I had to stay for two more years. But my postdoctoral period had run out—so to stay for two more years I needed to be a faculty member, my visa status had to change…a lot of changes had to take place. Once all that was done, I had been there for five years and then we had our first son. Then it [the stay] sort of rolled over. [Laughs] Somehow, the work there was going well and I was working very well with some colleagues, and so I just didn’t feel like moving from there.

When you went to the Courant Institute as a postdoc, was there a particular set of people there you wanted to work with?

SRSV: I think the area that I wanted to learn was very well represented there. So I knew some of the names but there were others too.

Did you have any personal acquaintance or meeting with Richard Courant himself?

SRSV: Sure. I mean, he had retired by the time I went there. But he was around and I met him socially a few times.

And in the general New York area, the Courant Institute included, were these the people you frequently met and interacted with—Mark Kac, Henry McKean, Monroe Donsker, Dan Stroock? Was Eugene Dynkin there too?

SRSV: Dynkin was never there; he was at Cornell University. I met him but not that often. Maybe two or three times a year because Cornell is about 250 miles away.

So it was primarily Kac, McKean and Stroock?

SRSV: Yeah. And Donsker.

How much of an influence was Mark Kac on your research?

SRSV: He had lots of [research] problems, so he would come and tell me, “Here is the problem.” These were challenging problems and one usually had to do a lot of work to solve them.

Did you also talk about what at that time was a just-published paper of his in American Mathematical Monthly, “Can One Hear the Shape of a Drum?”3 Did he speak about isospectral theory?

SRSV: Yes, he talked about that all the time.

Did you also speak to the physicists at New York University? Was Alan Sokal there?

SRSV: Yeah. He was actually in our department for a while. When he was there I would talk to him somewhat regularly, during seminars and so on. But we had other physicists too, occasionally. We had Tom Spencer for years, Michael Aizenman for four years…

Aizenman was also there?

SRSV: Yeah, he was on the faculty. Tom Spencer was there and Ingrid Daubechies was there for a while as a visitor. James Glimm was there for a while and I talked with Joel Lebowitz occasionally.

How different was Courant Institute from ISI in terms of academic culture at that time?

SRSV: I think in ISI, the statistics part of it was very good. You had great people like C.R. Rao, S.N. Roy, Sujit K. Mitra and so on. [Debabrata] Basu and Bahadur were both statisticians but knew quite a bit of probability as well. But in terms of current research in probability theory in areas like stochastic processes, diffusion processes, connections with PDEs [partial differential equations] and so on, there was nobody there. But that’s what I wanted to study. I could start studying some of it there, like the work of [Eugene] Dynkin that we started on. I was told that to continue it I would have to go to a place in the US, and the best place for such things was the Courant Institute. That’s why I applied there for a postdoc and was selected. I enjoyed it there very much. The area I was very much interested in, namely, analysis, PDEs and so on, was very strong there, which I guess was missing [at ISI]. Some of it was started in [ISI] Bangalore much later.

Later, you served as Director of the Courant Institute. Did your administrative position influence your academic research?

SRSV: It’s difficult. You see, the administrative position as the Director of Courant Institute does not take much time because you have a lot of staff who help and so on. But how do you measure time? If you measure time in terms of total duration, that’s fine. But that total duration consists of bits of ten minutes that require your attention. Those ten-minute bits come twenty times a day. [Laughs] So you don’t get a stretch of free time. It’s difficult to concentrate on writing and things like that but you manage to think a little bit, work with students and so on. That’s possible.

You were awarded the Leroy P. Steele Prize for Seminal Contribution to Research, in 1996, for your work on diffusion processes with Daniel Stroock and then the Abel Prize in 2007 for your work on large deviations with Monroe Donsker. Could you tell us more about these fruitful collaborations?

SRSV: When I went to Courant as a postdoc, Dan Stroock was a graduate student at Rockefeller University. He was working with Mark Kac and Henry McKean there. I saw him occasionally, said hello and so on. I had done some work on large deviations during my postdoc years, and at that time Stroock was working on something connected to diffusion processes. We started talking more and then he came to Courant as a postdoc after his Ph.D. We decided to work together on a certain problem, and it was an ambitious project—it took maybe four or five years to complete and I think it was an important piece of work. It was a fruitful collaboration.

Regarding my work with Donsker…I think it started because, you know, there is the Feynman–Kac formula which evaluates certain solutions of a PDE as an expectation, and the rate of growth of the solution is the principal eigenvalue just by eigenfunction expansion. So the eigenvalue describes the rate of exponential growth of a function space integral. If the function space integral had a large deviation principle, then this will explain it. The question basically is whether you can find a large deviation principle for the ergodic theorem. The ergodic theorem for independent and identically distributed random variables is what Cramér did. Can you prove a similar thing for Markov chains, or more generally for Markov processes in continuous time? That would lead to this [result] and probably many more.
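In symbols, the relation sketched in this answer is often written along the following lines. The notation is an assumption for illustration (β for Brownian motion, V for a potential, λ₁ for the principal eigenvalue of ½Δ + V, ρ for a probability density), and the regularity conditions on V and the domain are left out.

```latex
% Feynman-Kac: exponential growth rate of the function space integral
\lim_{t\to\infty} \frac{1}{t}\,
  \log E_x\!\left[\exp\!\left(\int_0^t V(\beta_s)\,ds\right)\right] = \lambda_1

% Variational (Rayleigh-Ritz) form, read as "gain from V minus a large deviation cost"
\lambda_1 = \sup_{\rho \ge 0,\ \int \rho\,dx = 1}
  \left[\int V\rho\,dx - I(\rho)\right],
\qquad
I(\rho) = \frac{1}{2}\int \bigl|\nabla\sqrt{\rho}\bigr|^2\,dx
```

Here I(ρ) plays the role of a rate function for the fraction of time the path spends in each region, which is the "large deviation principle for the ergodic theorem" mentioned in the answer.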

What attracted you to the particular problems that you worked on?

SRSV: One of the things that I have always liked in mathematics is that there may be several problems related to one another. At some level you see some similarities between them and it’s important to have some kind of a unified approach to these things, so that you can look at them from above and then you see the similarity and all the patterns as coming from one common theme. So if you look at diffusion processes, they can be defined through partial differential equations, they can be defined through semi-groups or solutions of Ito’s equations and so on, and each approach has its own theory of existence and uniqueness. The class of problems each one covers is very different, and there are stopping times and so on that play a role. A problem may be such that you can use one approach in a region and a different approach in another region. You can put these two pieces together and it still works. So is there a unified way via which you can look at these things? That’s what we did when I worked with Stroock.

Work on Large Deviations

When you published your famous work on asymptotic probabilities and PDEs in 1966,1 what were you generally thinking about those days? What was the general stream of thought?

SRSV: At that time there was a student of Donsker, Michael Schilder, who in his thesis had considered Brownian motion with small variance, and proved large deviations for it. But he formulated it as expectations of certain functions, leading to some variational formula. The way he proved it was by approximating with finite-dimensional integrals. For the Gaussian you have explicit densities, and so you can write down the finite-dimensional integrals explicitly. And then it’s a question of justifying the interchange of the two limits, which he justified. Somehow I felt that when you prove an invariance principle, that some things approximate Brownian motion, weak convergence and so on, the main action is in the space of probability measures. That’s where you define weak convergence. And then as a consequence you get integrals that converge. So there is a somewhat similar thing for large deviations. I wanted to define large deviations in terms of probability measures—what does it mean to say that a sequence of measures satisfies a large deviation property? And then to be able to deduce from it that the integrals lead to a variational formula. I did not want to use any finite-dimensional approximations to do this. I wanted it to be a general, abstract result valid in any setting. And that really was the goal of that paper. As an application, I gave an example which perhaps was not the most interesting example, and in retrospect, there were more interesting applications!
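For readers who want the statement in symbols, the definition and its consequence for integrals are usually given in roughly the following form. This is the standard textbook formulation in assumed notation (μ_ε for the measures, I for the rate function, F for a bounded continuous function), not a transcription of the 1966 paper.

```latex
% Large deviation principle for measures \mu_\varepsilon with rate function I:
\limsup_{\varepsilon\to 0}\ \varepsilon \log \mu_\varepsilon(C) \le -\inf_{x\in C} I(x)
  \quad \text{for every closed set } C,
\qquad
\liminf_{\varepsilon\to 0}\ \varepsilon \log \mu_\varepsilon(G) \ge -\inf_{x\in G} I(x)
  \quad \text{for every open set } G.

% Consequence for integrals (the variational formula):
\lim_{\varepsilon\to 0}\ \varepsilon \log \int \exp\!\left(\frac{F(x)}{\varepsilon}\right) d\mu_\varepsilon(x)
  = \sup_{x}\,\bigl[F(x) - I(x)\bigr]
```

The first pair of inequalities lives entirely in the space of probability measures; the variational formula for integrals then follows without any finite-dimensional approximation.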

You were really then looking at the broad general structure. Did it have some precedent at all from your work at ISI Kolkata? Did you take forward some work or some thought that you had, or was it freshly brewed in Courant?

SRSV: Well, I was familiar with Cramér’s theorem on large deviations. So I knew some of the techniques that they used like tilting measures, using expectations and Chebyshev’s inequality and optimizing, doing the Legendre transform, the connections and so on…I was familiar with it because Bahadur and Ranga Rao had done some joint work on that.
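The techniques listed here can be tried out on the simplest case, fair coin tosses. The following is a minimal sketch, not anything taken from the interview: the function names are hypothetical, the event considered is "at least 900 tails in 1000 tosses" (not necessarily consecutive), and the Legendre transform is computed by a plain ternary search.

```python
import math

def log_mgf(theta):
    """log E[exp(theta * X)] for one fair coin toss X in {0, 1}."""
    return math.log(0.5 + 0.5 * math.exp(theta))

def rate_legendre(x, lo=-30.0, hi=30.0, iters=200):
    """Cramér rate I(x) = sup over theta of (theta*x - log_mgf(theta)).

    The objective is concave in theta, so a ternary search finds the
    optimal tilt; the bracket [lo, hi] is an assumption that suits 0 < x < 1.
    """
    g = lambda t: t * x - log_mgf(t)
    for _ in range(iters):
        m1 = lo + (hi - lo) / 3
        m2 = hi - (hi - lo) / 3
        if g(m1) < g(m2):
            lo = m1
        else:
            hi = m2
    return g((lo + hi) / 2)

def rate_closed_form(x):
    """Relative entropy of Bernoulli(x) with respect to Bernoulli(1/2)."""
    return x * math.log(2 * x) + (1 - x) * math.log(2 * (1 - x))

def log_exact_tail(n, k):
    """log P(at least k tails in n fair tosses), from exact integer counts."""
    count = sum(math.comb(n, j) for j in range(k, n + 1))
    return math.log(count) - n * math.log(2)

n, k = 1000, 900
x = k / n
# The optimised tilt reproduces the entropy formula for the rate.
print(rate_legendre(x), rate_closed_form(x))
# Chebyshev-type (Chernoff) bound: log P <= -n * I(x).
print(log_exact_tail(n, k), -n * rate_closed_form(x))
```

The exact log-probability sits just below the bound −n·I(x), and the gap grows only logarithmically in n, which is why the rate function captures the leading exponential behaviour.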

We learn from your work in large deviations that it is very strongly related to ideas and concepts rooted in physics, especially statistical mechanics. Was it a problem from statistical physics that originally inspired you to think about large deviations at all?

SRSV: Well, the first work on the 1966 paper came about because of Schilder’s paper.2 And then I heard a lecture where there was a result due to Zbigniew Ciesielski. If you look at the ratio between the transition probability for a region with Dirichlet boundary data, and the transition probability without Dirichlet boundary data, when does this ratio go to one for all points (x,y) in the region? The result is if and only if the region is convex. The reason for it is that as t goes to zero, if a Brownian path goes from x to y, it is most likely to go on a straight line. So for the ratio to go to one without hitting the boundary, the region should contain a straight line joining any two points in the region—that’s just convexity.

When I heard this result I thought: “What if it’s not just Brownian motion but Brownian motion on a manifold?” Then straight lines should be replaced by geodesics. I wanted to prove such a result and that’s where the next work on large deviations comes from, which says that if you look at a Brownian motion on a manifold and if you look at the transition density for small t, it just looks like $\exp(-d^2/(2t))$, where d is the Riemannian distance. Except I did not state it like that—I did not know much Riemannian geometry at the time, so I stated it in terms of local variables in a flat space.
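The small-time behaviour described here is commonly written as a limit on the logarithmic scale. The sketch below assumes a Brownian motion with generator ½Δ, writes p(t,x,y) for its transition density and k for the dimension, and checks the claim only in flat space, where the density is explicit.

```latex
% Small-time asymptotics of the transition density on a manifold:
\lim_{t\to 0}\ 2t \log p(t,x,y) = -\,d(x,y)^2

% Flat-space check with the explicit Gaussian density in dimension k:
p(t,x,y) = (2\pi t)^{-k/2} \exp\!\left(-\frac{|x-y|^2}{2t}\right)
\;\Longrightarrow\;
2t \log p(t,x,y) = -k\,t \log(2\pi t) - |x-y|^2 \;\longrightarrow\; -|x-y|^2
```

The prefactor contributes only k·t·log(2πt), which vanishes as t goes to zero, so only the squared distance survives in the limit.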

And this got established via your 1966 paper. Could you give an example of an observable large deviation out there in physical world, something that one could measure?

SRSV: Well, there are many instances of large deviations. There are huge mega lotteries for hundreds of millions of dollars. If somebody wins one twice, that’s a large deviation. In fact, there was one such case and there were complaints that it’s fixed up and somebody calculated that considering the number of tickets sold, the number of lotteries and so on, this could happen in the realm of large deviations. It’s not physics but it’s social science. [Laughs]

Stock market crashes, tsunamis, disease outbreaks. Would these qualify as large deviations?

SRSV: Probably, yeah.

And since you have lived and worked so closely with Wall Street, how much of large deviation theory has actually percolated to models used by people on the trading floor? People who deal with swaps, options, futures, derivatives? Have large deviations been factored into their models?

SRSV: They are really interested in computing certain numbers in real time. So they want methods that are quick and dirty. [Laughs]

Not necessarily accurate and rigorously proven.

SRSV: Sometimes they use large deviation approximations when the time interval is very small.

Like it happens in algorithmic trading?

SRSV: Yeah, for the approximation of the calculations, they may use large deviation theory. [But] I don’t want to bet based on large deviations! [Laughs]

Going back historically, we associate the names of Bernoulli, Laplace with the classical problem of the gambler’s ruin, or the lucky lottery winner. How does one understand large deviations through the prism of the central limit theorem?

SRSV: In the central limit theorem, you look at sums of n independent random variables, say, with their means being zero. Then it [the sum] is of order $\sqrt{n}$ roughly. If you ask what is the probability that it [the sum] is something times $\sqrt{n}$, then the limit exists if you normalise suitably. It’s not a trivial limit, and you get the Gaussian distribution. So the probabilities bigger than $A\sqrt{n}$ are like $\exp(-A^2/2)$ to within a constant. That’s true for every finite A. What if A is going to infinity with n? Then both sides go to zero. But does the ratio go to one? For the ratio to go to one, you have to make corrections of various kinds in the right-hand side. It is valid actually to a very large level and these are called moderate deviations. Then they all basically reflect the Gaussian nature. They’re universal in the sense that they don’t depend on the initial distribution that much. But once you go to the range $A \times n$ then the answer is very much dependent on the initial distribution. That’s what large deviations are about. So you have fluctuations which are normal deviations, and then you have the moderate deviations which are slightly larger, and finally you have really large deviations which are of the order n.
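The three regimes in this answer can be summarised in formulas. The sketch below assumes independent, identically distributed summands X_i with mean zero, variance one and finite exponential moments; S_n denotes their sum, Φ the standard normal distribution function, and b_n an arbitrary intermediate scale introduced only for illustration.

```latex
% Normal deviations (central limit theorem), fixed A:
P\bigl(S_n \ge A\sqrt{n}\bigr) \to 1 - \Phi(A),
\qquad
1 - \Phi(A) \sim \frac{1}{A\sqrt{2\pi}}\, e^{-A^2/2} \quad (A \to \infty)

% Moderate deviations, \sqrt{n} \ll b_n \ll n: still Gaussian on the log scale
\lim_{n\to\infty} \frac{n}{b_n^{2}} \log P\bigl(S_n \ge b_n\bigr) = -\frac{1}{2}

% Large deviations (Cramér), a > 0 fixed: depends on the whole distribution
\lim_{n\to\infty} \frac{1}{n} \log P\bigl(S_n \ge a n\bigr) = -I(a),
\qquad
I(a) = \sup_{\theta}\,\bigl[\theta a - \log E\,e^{\theta X_1}\bigr]
```

For small a the rate satisfies I(a) = a²/2 + O(a³), which is how the large deviation answer joins up with the Gaussian one.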

In this picture, where does the work of Harald Cramér sit?

SRSV: He looked at the really large deviations for independent, identically distributed random variables on the line.

Is it a fair statement to make that Boltzmann in 1877—who first quantitatively defined entropy—and Einstein in 1905—with his paper on Brownian motion—both had, in some sense, looked at what could be seen as early precursors of large deviations?

SRSV: I am not so sure. I think Boltzmann’s idea was that equipartition of energy gives you the distribution with density and that tells you what the equilibrium distribution is for various systems. That doesn’t tell you much about what the large deviations are. I think the first physicist, to my knowledge, who uses large deviations in some sense is Feynman. He has some huge function space-based integral to calculate. He calculates it by tilting the measure, writing the Radon–Nikodym derivative and then using Jensen’s inequality, and then optimising it with respect to the tilt. That’s precisely what you do in large deviations. In his example, he calculates a certain partition function.

You just mentioned the name of Richard Feynman. Where would you place the works of Louis Bachelier and Paul Lévy in this context?

SRSV: They were all limit theorems of various kinds. I think the first work—rigorous work—on large deviations, before Cramér, was done by a Swedish insurance person who looked at, for some point process, claims of insurance where the individual claims were independent random variables. If the insurance firm had certain assets [to meet these claims] he wanted to calculate the probability of the firm going bankrupt. This was done by an actuary before Cramér and that’s where Cramér got the idea to do the problem in a more general context.

What about Benoit Mandelbrot? He looked at fractals and Lévy walks. But did he not look at large deviations?

SRSV: No.

Would it be correct to say that, when you start to think about a certain problem, you first look at it intuitively and also via principles of physics and that the math only follows much later, after your initial intuition has formed, and when you have a rough idea of where you are heading?

SRSV: It depends on the problem. Some problems have their origins in some physical issue, so the intuition definitely helps. But there are other problems which are perhaps combinatorial in character where an entirely different type of intuition is useful.

But is it really intuition nevertheless that you start off with?

SRSV: Still intuition, yes, but it doesn’t necessarily come from physics.

But it is intuition with which you begin, which you later make precise using the relevant mathematical tools.

SRSV: Yeah.

Connections between Statistical Physics and Large Deviations

I will take a slight detour on these matters and try to bring in a few people whom you have worked with. I’d like to know your thoughts on where your work intersects with that of the Russian mathematicians Ivan Sanov, Mark Freidlin, Alexander D. Wentzell and Roland Dobrushin.

SRSV: I think Sanov’s work is very much related to Cramér’s work and my work. Dobrushin’s work is slightly different.

In what sense?

SRSV: I don’t think he looks at large deviations as much as Gibbs states, how to define them and so on. Freidlin and Wentzell, of course, look at large deviations in a different context. It’s more in a context similar to what I did in 1966 related to stochastic differential equations with small noise, whereas the statistical mechanical connections are different. It’s more about what happens at large times and maybe things like that. But in the end it’s interesting that they can all be unified by just using entropy. In the end, all large deviation issues are really a fight between energy and entropy and that’s a statistical mechanical concept.

I was actually going to bring that in. On certain occasions in the past you have mentioned that entropy and energy have been the two dominant themes of your work. So the example you mentioned reinforces this point. But how does one understand the two especially in the context of hydrodynamic scaling which has been a big part of your research work, particularly in collaboration with the Stanford mathematician George C. Papanicolaou?

SRSV: The fight between energy and entropy has to do with equilibrium statistical mechanics. Non-equilibrium statistical mechanics, on the other hand, has to do with change in entropy. There is always a slight difference between a physicist’s entropy and a probabilist’s entropy—there is a difference in sign. So, there is global equilibrium and you start with something that is far away from the global equilibrium and the system evolves towards this global equilibrium. You compute what is called the relative entropy, that is, relative to the global equilibrium. That quantity is always positive, and the H-theorem says that it’s always decreasing—when it reaches equilibrium, it becomes zero. Now, if you start a macroscopic system, initially the entropy is proportional to the volume, in some sense, and the entropy per unit volume is one. It is always non-negative, it is always decreasing and your time is very long because the hydrodynamic scaling with time is very long. So the rate of change of entropy has to be very small. And then you prove a separate theorem that says: If, for any system, the rate of change of entropy is very small, it’s locally very close to equilibrium. That lets you replace the microscopic averages by their macroscopic expectations in the state that defines the local equilibrium. That gives you the nonlinear PDE to which the system converges asymptotically. That’s the scope of hydrodynamic scaling.
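
The monotone decay of relative entropy can be seen numerically in a toy setting. The sketch below is only an illustration under stated assumptions: it uses a small finite-state Markov chain as a stand-in for the large particle systems discussed here, and the transition matrix `P`, the starting distribution and all function names are hypothetical choices, not taken from the interview.

```python
import math

# Hypothetical 3-state transition matrix (each row sums to 1),
# chosen only for illustration.
P = [
    [0.5, 0.3, 0.2],
    [0.2, 0.6, 0.2],
    [0.1, 0.3, 0.6],
]

def step(mu, P):
    """Advance the distribution mu by one step of the chain: mu -> mu P."""
    n = len(mu)
    return [sum(mu[i] * P[i][j] for i in range(n)) for j in range(n)]

def relative_entropy(mu, pi):
    """H(mu | pi) = sum_i mu_i * log(mu_i / pi_i), with 0 * log 0 = 0."""
    return sum(m * math.log(m / p) for m, p in zip(mu, pi) if m > 0)

def stationary(P, iterations=500):
    """Approximate the stationary distribution by repeated multiplication."""
    mu = [1.0 / len(P)] * len(P)
    for _ in range(iterations):
        mu = step(mu, P)
    return mu

pi = stationary(P)          # plays the role of the global equilibrium
mu = [0.98, 0.01, 0.01]     # start far from equilibrium

entropies = []
for _ in range(11):
    entropies.append(relative_entropy(mu, pi))
    mu = step(mu, P)

for n, h in enumerate(entropies):
    print(f"step {n:2d}: H(mu_n | pi) = {h:.3e}")
```

The printed values are non-negative, never increase from one step to the next, and shrink towards zero as the distribution approaches equilibrium. This is the probabilist's sign convention; the physicist's entropy would increase instead.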

When Albert Einstein started thinking about the theory of relativity, he is supposed to have imagined shrinking himself to the size of an electron that is travelling nearly at the speed of light and then looked at the universe around him, just so as to inform his own intuition. In your work, a certain picture emerges where you talk about the so-called “perspective of the particle”. Are there similarities in thought and approach?

SRSV: I don’t want to compare myself in any way to others in this matter. [Laughs] You have a particle that’s moving and it’s reacting to its environment, and you want to know, on average, how rapidly it moves. What’s relevant is the ergodic theorem of the environment it sees. So all that matters is to look at the statistics of the environment as seen from the particle, because that’s what the particle knows, and that’s all that there is.

And in the case of a large spatially extended system, a particle looks only at a local neighbourhood.

SRSV: Yes, and it’s changing. You see, you want an equilibrium. Since everything is changing and everything is connected to everything else, and also since it’s not an isolated system, the local equilibrium as seen by the particle is actually determined by the whole big thing around it.

So there is a very close relationship between what happens locally and where the system is going globally.

SRSV: In many examples you can compute it explicitly. And those are the examples which people work out. In the other examples, it is very difficult. Sometimes in some systems, you can reduce it to something else, but in general these are non-reversible systems in some sense and to compute the invariant measure for non-reversible systems is very hard.

We hear of the so-called levels in the theory of large deviations. Intuitively speaking, could you please briefly tell us what these levels are?

SRSV: See, you apply large deviation theory on some space. Cramér’s theory applies to random variables with values on the real line. So you can think of it as the $\mathbb{R}^d$ space. In Sanov’s theorem, on the other hand, the large deviation is on the space of measures on $\mathbb{R}^d$. Then you can think of large deviations on the space of measure-valued functions on the unit interval.
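
The first two levels can be put in symbols. This is an informal sketch for independent, identically distributed random variables $X_1, X_2, \dots$ with common law $\mu$ on $\mathbb{R}^d$ and a finite moment generating function. The notation $S_n$, $L_n$, $I$ and $H$ is assumed here for illustration, and $\approx$ stands in for the precise upper and lower bounds over closed and open sets.

```latex
% Cramér: large deviations of sample means, on the space R^d.
\[
P\!\left(\tfrac{1}{n} S_n \approx x\right) \approx e^{-n I(x)},
\qquad S_n = X_1 + \dots + X_n,
\qquad I(x) = \sup_{\theta \in \mathbb{R}^d}
  \bigl\{\langle \theta, x \rangle - \log \mathbb{E}\, e^{\langle \theta, X_1 \rangle}\bigr\}.
\]
% Sanov: large deviations of empirical measures, on the space of measures on R^d.
\[
P\!\left(L_n \approx \nu\right) \approx e^{-n H(\nu \mid \mu)},
\qquad L_n = \frac{1}{n} \sum_{i=1}^{n} \delta_{X_i},
\qquad H(\nu \mid \mu) = \int \log \frac{d\nu}{d\mu} \, d\nu .
\]
```

In both cases the rate is an entropy-type quantity: $I$ is the Legendre transform of the log moment generating function, and $H$ is the relative entropy of $\nu$ with respect to $\mu$.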

Reflections

What is your typical work routine—I mean, your day-to-day work routine?

SRSV: I don’t have any day-to-day work routine in the sense that each day is as it comes. There is always free time—children, watching the news on TV, watching a movie that I am interested in. But still there are free times. If it’s a working day, you go to office and you have free time in the office. Or if you get up early in the morning at five o’clock, you have a couple of hours of undisturbed time. Or if you take a long shower, you have a lot of free time. [Laughs] These are the times which you can use to think. Most of the time you are thinking to see how you can solve a problem. Once you have an idea, you have to do a little calculation to see if it works or not, so that you can quickly determine whether the approach will work or not. Once you decide it works, you have to write it up formally and that’s what takes a lot of time because you have to put a lot of effort into it. Writing a detailed proof has two aspects to it: One is to convince yourself that you do have a proof and the other is to convince others that you do have a proof. These are not the same, so you have to write for both. That takes time.

What are your reflections on the state of mathematics education and research in India?

SRSV: I think there are some good institutions in India where mathematics is taught well, but they are very few. I think there are lots of new IITs [Indian Institutes of Technology] and they have faculty who teach. Some of them have very good faculty. But I think the ratio of the number of faculty engaged in current research to the number of incoming students is still very low. So you don’t have enough faculty to handle all the teaching at the level you want them to teach. As a result, you do not have a large enough base to generate a large community of active mathematicians in India. That’s my opinion.

How do you keep yourself motivated, in and outside of mathematics?

SRSV: I like sports. I still try to play some tennis once in a while. I like to watch tennis and basketball games. Although I am not an expert, I like to listen to some music. New York is a culturally very active city, so we go to concerts, both Eastern and Western, and to see plays on and off Broadway. So these are the outside activities my wife and I love and participate in.

What do you find most rewarding or productive when doing mathematics?

SRSV: You know, mathematics is often like a jigsaw puzzle. You want to show something is true. If showing something is true were easy, it’s not interesting, right? It should be hard. Very difficult. The more difficult it is, the more challenging it is—it leads either to extreme frustration or extreme satisfaction. [Laughs] It’s like a puzzle where you have a few steps you have accomplished, a few lemmas you can prove. In the end it boils down to one or two key steps that you need, and if you can do them, the whole structure is solid. And I think complete satisfaction is when you take those final steps and say “Ah! Now it’s complete.” That’s a big satisfaction.

What guidance would you want to give young students who want to become professional mathematicians?

SRSV: I think it’s important when you are a graduate student that you are preparing yourself by gathering as many tools as you can. Very often students come in and start working with their advisor and then focus on their research, and want to complete their Ph.D.s quickly. And they do it—they do a nice thesis with their advisor and then they are done. That’s not so good, because in the end you’re not going to be following the same path your entire life. You need to branch out and you need to learn other things. It’s very difficult to learn new things as you get older. So when you are a graduate student, and if you are in a place where there are a lot of different courses offered, take them—even if you don’t plan to work in them—and get the information into your head. It may not be immediately useful, but sometimes, surprisingly, some of them will be useful at some later time. So a broad education as a graduate student is, I think, important.

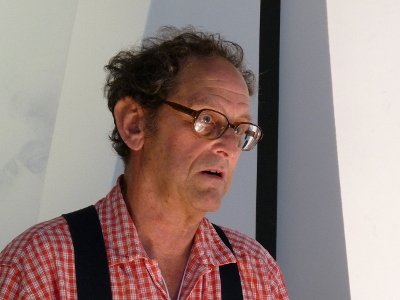

Freeman Dyson, in an article in the Notices of the AMS [4], spoke about the “bird” and the “frog”. The bird represented people who like to take a big picture view of things and the frog, people who obsess over the details. Personally, what do you recommend to beginning students?

SRSV: I think you have to earn your wings by being a frog first. You need the experience of the frog in order to understand what you see [as a bird].

It was wonderful talking to you, Prof. Varadhan. Thank you very much for your time.

SRSV: Thank you.

Acknowledgment

Bhāvanā would like to acknowledge ISI Bangalore, where this interview was held, and the assistance of T.S. Sneha in the preparation of its publication.

Footnotes

1. S.R.S. Varadhan. Asymptotic Probabilities and Differential Equations. Communications on Pure and Applied Mathematics. 1966. 19(3): 261–286.

2. M. Schilder. Some Asymptotic Formulas for Wiener Integrals. Trans. Amer. Math. Soc. 1966. 125: 63–85.

3. Mark Kac. Can One Hear the Shape of a Drum? Am. Math. Monthly. 1966. 73(4): 1–23.

4. Freeman Dyson. Birds and Frogs. Notices of the AMS. 2009. 56(2): 212–223.