In an illustrious career marked by numerous accolades, including the esteemed Gödel Prize, the ACM Paris Kanellakis Theory and Practice Award, and the Erdõs Prize, Dinur’s appointment as a permanent faculty member in 2024, in the School of Mathematics of the prestigious Institute for Advanced Study in Princeton, created history – she became the first-ever woman to be accorded this honour in the nearly-century-long history of the institute.

In this exhilarating conversation for Bhāvanā, she speaks extensively and passionately about her early life in Israel, growing up in a family immersed in chemistry, and her journey as a student navigating the thickets of theoretical computer science to emerging as a globally acclaimed pioneer in the areas of probabilistically checkable proofs, error correcting codes, and high-dimensional expanders. Her work stands as a testimony to the adage that there are only few things more practical than a good theory.

Good morning, Irit. We wish you a very warm welcome. Let us start by wishing you Shalom! At the outset, we thank you for your time.

ID: Thank you! Does the name Bhāvanā mean something

Yes, it does. Bhāvanā is a Sanskrit word that was used by ancient Indian algebraists to name a principle of “composition” introduced by Brahmagupta in the seventh century. In his work Brāhma-Sphuṭa-Siddhānta (628 CE), Brahmagupta discusses the problem of finding positive integer solutions to a certain quadratic indeterminate equation that was later called Pell’s equation by Euler, a misnomer as is quite well known. The law of composition that Brahmagupta describes, combining two solutions of this equation to generate a third solution of the same equation, has come to be known as bhāvanā. The name of our magazine bears the imprint of this insightful composition.

Besides its mathematical usage, the word bhāvanā has profound nuances in Sanskrit. Its meanings include “creating’’, “producing’’, “generating’’, and “composing’’, and the word bhāva refers to a high spiritual state.

ID: Wow! Interesting. In Hebrew, there is a similar sounding word “havaná”, which means “understanding”. In Computer Science, I am reminded of a somewhat related operation called polymorphism.

Ah, that’s wonderful to know! So, let us reiterate that it’s an incredible privilege for us to be speaking with you. As always, we start at the very beginning. We would like to know where you were born and also briefly about your family.

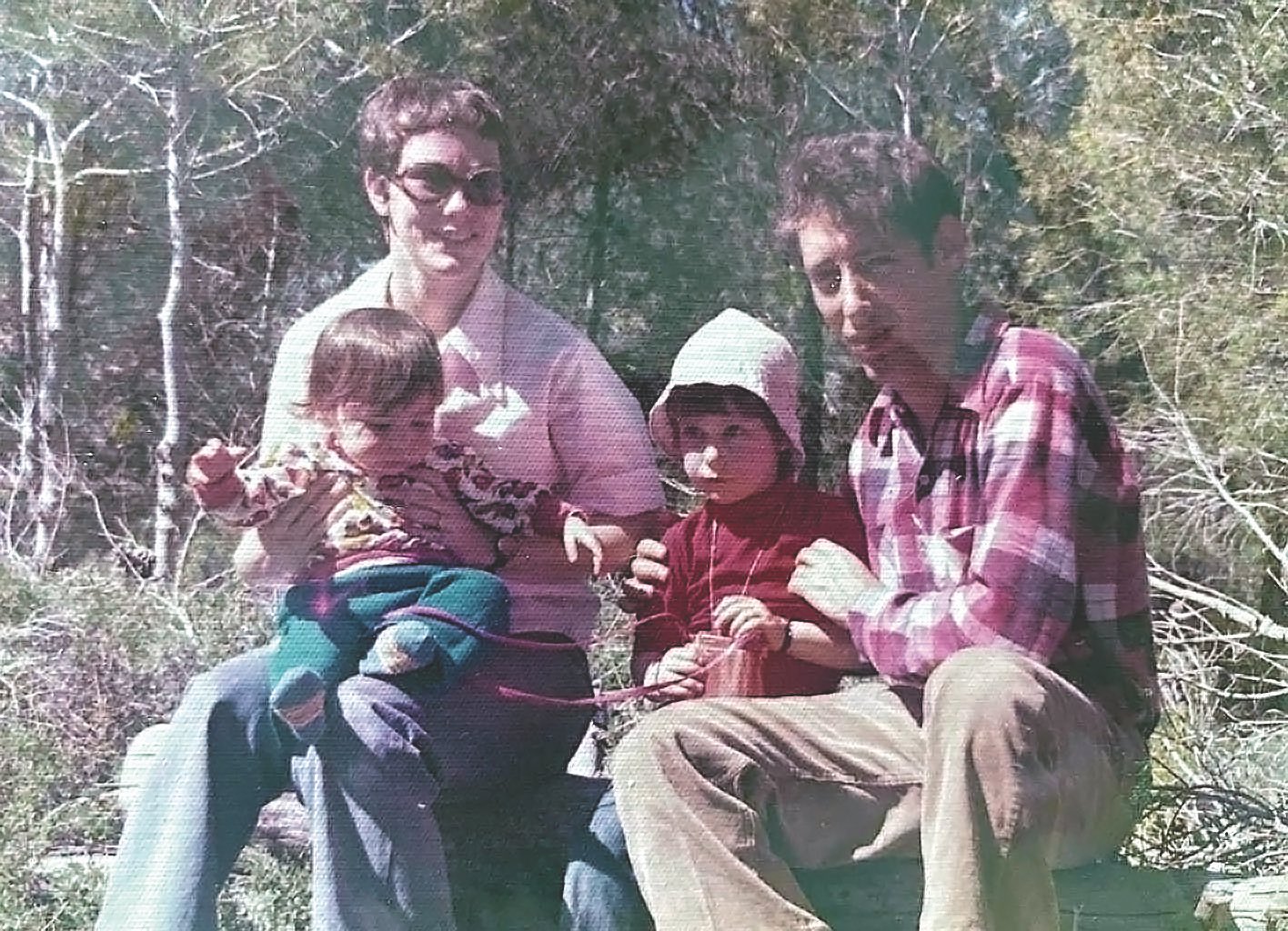

ID: Honestly, it’s a privilege for me as well to meet you all, and talk to you. So thanks for including me. I was born in Jerusalem, Israel. I was the first born. My parents were both students at that time. And I grew up for the first few years of my life in Jerusalem, where my dad was pursuing his PhD in chemistry.

Then we moved around. He went on to a postdoc and then the family settled in the south of Israel, which is a kind of a desert region—very arid, hot, and dry. But Israel is quite small, and this place was still only an hour and a half away from the centre. But still it was somewhat remote, and also a bit provincial.

Now a little bit more about your family. Have they been living in Israel for a long time? Or did they move in from somewhere else?

ID: No, no! My family, like many other families in Israel, immigrated to Israel at some point. So my parents weren’t living there before a certain point in time. My mother was raised in Jerusalem. But her own parents both came from Poland. They came when they were still very young, only in their early twenties, and were revolutionaries traveling from Poland to Israel when it was still somewhat controversial to do that. Their own parents didn’t want them to leave Poland. But then my grandfather snuck out and reached Israel.

My maternal grandmother had received some money from her family to come and study medicine in Israel. But after a year of medical studies in Israel, she realized that it was not for her. And then later she trained to become a nurse, and that is what she did her entire life.

And just a year after she was here, and this was sometime between 1938 and 1939, that is just before the war broke out, she went back to Poland for a summer vacation. And in 1939, the war started in Europe! So she was stuck in Poland for a while. But she had a visa, and so she escaped from Poland; but the rest of her family could not get out of Poland, and sadly didn’t survive. And on my maternal grandfather’s side too there was great tragedy. His Polish family too didn’t make it. And after the war ended, only one of his sisters managed to make it to Israel.

On my maternal grandfather’s side there was a great tragedy. His Polish family didn’t make it

He himself had been fighting against the British as a freedom fighter, trying to get the British to leave Israel. In the middle of all this mayhem, obviously there was no way to contact people. My grandfather’s sister had no idea where he was. There was no internet! So she posted a message on an available radio station announcing that she was looking for a certain Menachem Lewin, a designated terrorist according to the British Empire.

Somehow this idea worked, and she could eventually find him. Both dramatic, and traumatic! That’s my mother’s parents for you.

My father’s parents also came from Europe. But my paternal grandmother was actually born in Israel. Her parents had come a generation before. My grandfather came here from Germany. His family managed to escape before the war started. This by the way is very typical of a lot of people’s families here in Israel.

That’s a remarkable story indeed. So is your surname German?

ID: My surname Dinur is a Hebrew version of my father’s surname Feibischoff. It’s not at all clear where this surname is originally from. It’s somewhat Russian, and somewhat German as well. Anyhow, in the Hebrew tradition, Dinur means “sparks of light”. And it is said that when two wise people interact, it results in metaphorical sparks of illuminating light. That’s the meaning behind my surname Dinur!

Beautiful! Would you like to share anything interesting from your childhood days—especially something that had a strong bearing on your later professional life as a researcher?

ID: Sure. As the oldest grandchild on both sides, I received a lot of attention. Both sets of my grandparents were very excited to witness the next generation arrive, and grow up. So, I was quite pampered. On both sides, my family was very intellectual and greatly valued both learning and scholarship. This emphasis on knowledge is very much a part of the overall Jewish tradition as well. Even though they were not religious, and although I too did not grow up in a religious family, I was still exposed to a strong tradition of respecting the pursuit of knowledge.

On both sides, my family was very intellectual and greatly valued both learning and scholarship

Prioritizing learning and knowledge above material wealth, for instance?

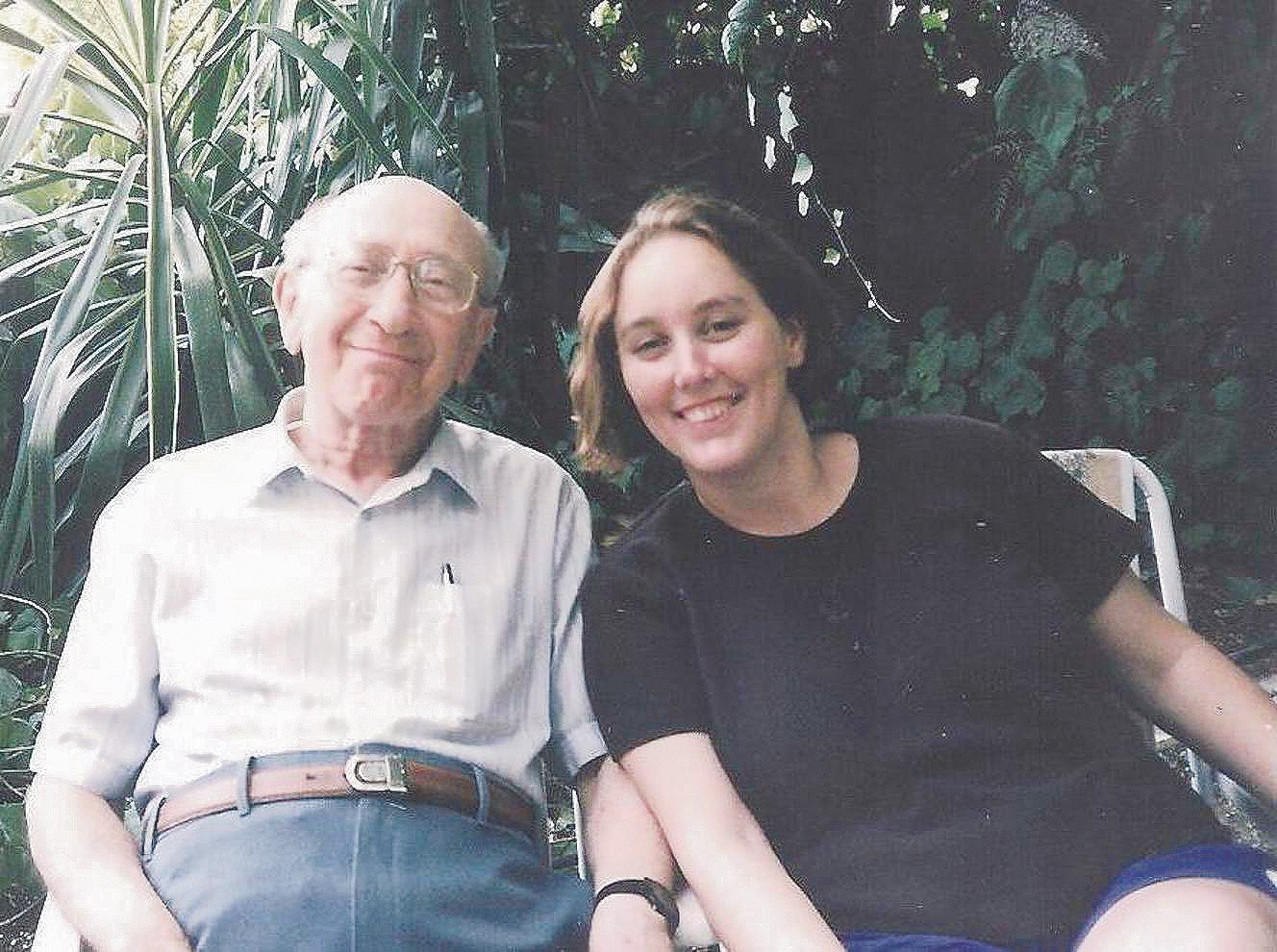

ID: Exactly! My maternal grandfather was a reputed intellectual known worldwide for his work in polymer chemistry.

What was his name?

ID: His name was Menachem Lewin. He had many links with India among other countries. When he was old and during the years that I knew him as an adult, he told me that in his own life he perhaps overemphasized intellectual wealth, and felt that money too was important.

Hmm!

ID: But the most important thing to him was building a strong country. Even before Israel was formed in 1948, he had spent three years in a British prison because he was involved in revolutionary activities. He was politically very active. And simultaneously, scientific pursuit too was very important for him.

All of this happened in Jerusalem and its neighbouring areas?

ID: I guess it was mostly in and around Jerusalem.

Could you now share with us the school that you attended, and the subjects that you found interesting?

ID: I went to a bunch of schools because we moved around a lot, then. When I was a kid, I liked all the subjects. I just liked learning lots of things. But I remember once when elders in the family asked me about what I liked in school. And I told them that I loved math; and that I loved literature too, among other things. I recall with amusement the response I received then. They all went “No, no, no. You just continue to do math”. I reiterated then that I did also love literature. And I just heard the same “No, no, no. Stick to math”! In hindsight, I guess my abilities and aptitude were more evident to the elders in the family than to my own self.

They all went “No, no, no. You just continue to do math”

So your family strongly backed you to do math?

ID: Oh yes. I mean, they wanted me to do whatever I wanted. It so happens that my parents first met when they were both young undergraduate students studying chemistry. My mom went to study chemistry because her father was a renowned professor of chemistry. When I spoke about my interests with my grandfather, he was very clear that he wanted all his offspring to study chemistry. And actually the story in my family goes that he always told them that they could choose to study whatever they wanted, as long as it was chemistry!

That’s a nice anecdote indeed!

ID: To be really fair to him, he gave me the freedom to pursue my own interests. At the same time, I guess my father was aware of an overemphasis on chemistry in the family. So he told me that I should try to do something further and other than the family’s predominant interests at that time.

A big thank you to your father for encouraging you to do mathematics.

ID: Also, I guess growing up as we did in our generation, chemistry wasn’t the biggest thing to pursue. Personally speaking, computer science was so much more compelling.

You are right. Computers were already in the air in the ‘80s. We also had the first generation of microprocessors around. We note that an influential teacher or a mentor who took careful interest during one’s early school days eventually ended up motivating them to pursue a career in science. Did you have any such benevolent mentors from your school days?

ID: In my case, the main thing was that I already was lucky to be born into a highly academic family. My father and grandfather were both professors of chemistry. So a fairly well defined career path was readily available for me. Also, I did not have a teacher who mentored me or played an influential role. My own family itself had made the pursuit of science attractive for me.

the most important thing to my maternal grandfather was building a strong country

That is a real blessing!

ID: Yes, In fact around the time that I finished my undergraduate studies, there was a “big” high-tech boom. It feels ridiculous to say it now, but that was the prevailing sentiment around that time. And all of my friends landed jobs that paid good money. I was also tempted, and so I went out and got myself a programmer’s job. But on the other hand I saw how my family was very disappointed with my decision. Incidentally, in just about a year I was bored, and did not enjoy what I was doing.

You didn’t like what you were doing?

ID: Maybe I did not choose the right place to work. I chose a place that paid the most money, and I’m not sure that was the wisest criterion.

My own family itself had made the pursuit of science attractive for me

Was it a regular programmer’s job?

ID: Yeah, it was just a programmer’s job.

Going back a little bit in time, which of these adjectives would describe you better while you were in high school? Were you gregarious and curious; or, serious and focused?

ID: I was certainly focused. I don’t think I was serious, but I definitely liked to study and learn a lot of things that interested me. My high school experience was quite bad. I hate to admit it but it is the truth.

Oh! Around that time in people’s lives not only is one’s mind expanding, but the body’s also changing. There’s teenage angst to manage, while experiencing a burst of youthful energy. The perfect recipe for chaos.

ID: True! For me, there was something in addition to all of this. At that time, Israel was more socialistic, which I think is nice in itself. And the authorities had this idea that they called integration, wherein they took people from various neighborhoods and sent them to schools, so that they mixed well together. It was also a response to the fact that Israel was an immigrant country, with people coming in from all over the world. Therefore the authorities wanted people to mix, and get to know each other well. But for me, it was not a good experience.

Oh, yes! It was an absolutely amazing first experience

I had grown up in a rather nice neighborhood. My dad was a professor, and we therefore enjoyed a kind of privileged life. Now, all such privileged kids were bunched together and dispatched to a neighborhood that was rather modest.

I see.

ID: The idea was that integration would happen better and faster in such an environment. But the school I was assigned was totally run down, and it also had a lot of violence.

Oh dear!

ID: I therefore started cutting classes, refused to participate in academic activities, and simply ended up not enjoying the whole experience. On the other hand, I guess this experience also made me internally more resilient.

Hmm… you mentioned that you moved around a lot during your early days. Was it mostly inside Israel?

ID: Not really. We spent two years in the USA when I was in my first and second grade. My dad was awarded a postdoctoral position at Harvard. So we lived in the Boston area for some time. I even went to school there, which I still really remember quite vividly. I really enjoyed that part.

Nice!

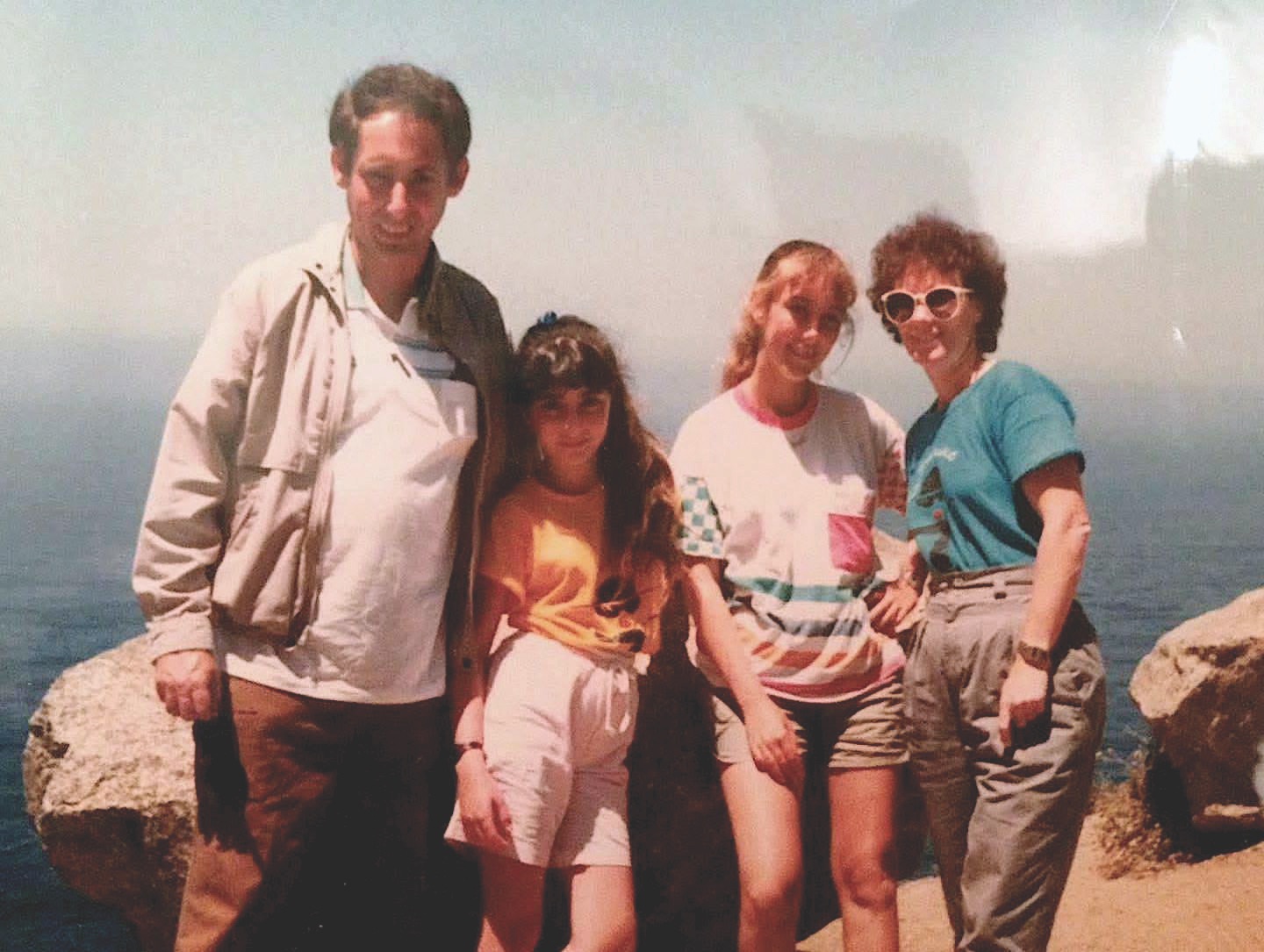

ID: We then came back to Israel, to Be’er Sheva. It’s a desert region. And when I was in eighth and ninth grade, we again went back to the USA, where my father was headed to spend his sabbatical. This time we went to California.

During your school days, when was the very first time you saw and used a computer? Was it love at first sight?

ID: Oh, yes! It was an absolutely amazing first experience. The first time I saw a computer was when we were in the US, and when I was perhaps still in second grade. We had gone out to meet some friends of my grandfather. And the family we went to meet had just then bought a computer that you hooked up to a television screen. And that was just amazing. Our host also showed us how to programme the computer in Basic – say you write 10, and then you write some command. And I kept wondering to myself how one could learn to do that! It was a crazily wonderful experience. But the machine was way, way too expensive. There was no chance in the world that my family would have been able to afford such a thing. And indeed, it took our family another few years before my dad brought home a computer. And this was when we were back home in Israel.

Also in Israel, we had a neighbour across the street. His parents were quite well to do, and they bought a computer before we had one. So he and I would play with that computer a lot. We learnt how to program. We also wanted to write our own computer games, but we simply couldn’t figure out how that could be done. I was quickly convinced then that this was really the most amazing thing in the world.

Was this in the early ‘80s?

ID: 84–86 possibly.

Wow! That early encounter, looking back with hindsight, proved to be hugely consequential in your later life. As a student, when was the first time that you felt that computer science was perhaps not a bad career option after all?

ID: When I was about to join my undergraduate course, I wanted to do math. That was the thing that I felt was the most interesting and intellectually appealing for me. But computer science was much harder to secure admission to. One really needed pretty high scores to get into computer science. And luckily, I did have those high scores. So I thought, “Why don’t I do computer science”? I started my undergrad studies and I learned things like discrete mathematics and data structures.

Those were also some of the most amazing courses that I took back then. And in the final year, I took a course on computational complexity. This course simply blew me away and turned out to be a decisive event in my life. We learnt a lot of NP completeness reductions, in addition to other things in complexity. I don’t know really why I was so deeply impressed by this subject. There was another course from those years that I liked a lot. It was Topology. So, I went to the professors of both these two topics, and I told them that I loved the course. What could I do next? And the computational complexity professor told me that he himself felt that it was a dead subject, and that it was not really headed anywhere. But he also said “You know what, a newly appointed young researcher is joining the faculty next year. Why don’t you go and talk to him even though the area is almost finished”

computational complexity course simply blew me away and turned out to be a decisive event in my life

Oh goodness! Did all this happen in the Hebrew University of Jerusalem?

ID: In TAU [Tel Aviv University], where I did my undergrad studies. And in the very next year Muli Safra, who later would end up being my PhD advisor, came in and joined the faculty. I went to meet him and remarkably, I got a wholly different perspective and view of the field of computational complexity. Most importantly, Muli Safra told me that the field, far from dying, was very much alive and kicking with exciting new developments.

Incredible story! Somebody speaking to you from a position of authority advised you to avoid the field, but you still stuck to your passion. And more importantly, you made that passion count. There is a big message in this for young people. Pursue what you love with all that you have!

ID: Yes, you should! Also while deeply involved in it to this day, I haven’t ever felt that I have gone against the grain. But these experiences kind of stay in one’s mind. There was also one other incident involving a senior academic whose name I do not want to mention. He was then a dean at Columbia University in New York, and also a friend of my parents. And he told my parents that while it was great that their daughter was doing computer science, they would do well to advise her to stay away from Theoretical Computer Science (TCS).

Oh!

ID: Around the same time, there were a lot of theoreticians working in Israeli universities. And in America, it was a time where there was a big push against theory. It’s well known that there were public debates on this topic, and NSF had even slashed their budget for funding research in theoretical computer science. Apparently, he was aware of that and told my parents that theory was really on the decline. “Just keep her away from theory”! But it didn’t work out that way. And on the contrary, things have worked out just fine for me!

Did your parents convey this message to you to stay away from theory?

ID: My parents trusted me. They told me the whole thing, but they also knew that I would do whatever I felt was good for me.

Amazing! Well, just for the record, did you also consider alternate career options before starting undergrad education? You mentioned earlier that mathematics was another serious option that you had in mind back then.

ID: Mathematics was certainly another serious option. In my own career thus far, I have always kind of leaned towards maths. That is because maths is something that I really love. So I don’t think it has ever been two different paths. Even while working in theoretical computer science, I have been able to blend math and computer science. I see a lot of unity in the two disciplines.

maths is something that I really love

That’s nice. Revisiting those early days of your student life, was there a person, or even an idea situated in the broad arena of science that inspired you passionately?

ID: Later in life when I was an undergrad, I saw, even though from a distance, an unmistakable sense of camaraderie and bonhomie evident especially in the community of people studying complexity theory. A lot of people there studied pseudorandomness, and this was a highly influential group of people. Avi Wigderson, Oded Goldreich, Shafi Goldwasser—all these people seemed to have such a great time doing what they loved doing. They were just having a ball, really. They absolutely loved what they were doing. You could see it, and feel it.

Oded Goldreich was at Weizmann at the time. Avi Wigderson was in the Hebrew University in Jerusalem. And they’re all very friendly with each other. Always witty and enjoying each other’s company, while enjoying their research; and doing such conceptually amazing work that they are all known for. For instance, thinking about what randomness really means. And what role it plays, say in cryptography. Also, at a deeper level, what does it really mean to prove something? And for me personally, I thought it was simply amazing. Here were a bunch of world class researchers who were thinking about deeply philosophical things, and simultaneously having such a great time. This was just what I myself was passionately seeking – I felt.

Truly inspiring! Also, can you recall people whose works you studied during those days, and which struck you as being truly noteworthy vis-a-vis both insight and lucidity?

ID: Yes! I straightaway recall the classic paper with an interesting title by Adi Shamir, Ron Rivest and Len Adleman in 1979, and which was about playing mental poker over the phone. Such papers had a really strong impact on me, and were also written using surprisingly basic and relatively simple techniques.

Later in 1982, Shafi Goldwasser and Silvio Micali took this idea further on how one could play mental poker,1 while ensuring the secrecy of certain information. The greatness of these papers was that they used simple ideas that involved only say playing a game of cards—even while motivating novel and powerful ideas. I think this combination of employing simple examples and yet conveying deeply consequential ideas was what I found highly compelling.

Indeed! In hindsight, even though the building blocks of these ideas appear so elementary, the consequences that these advances ended up creating are truly staggering.

ID: Well, we didn’t really know that at the time, right? For instance, zero knowledge had no applications whatsoever in the early days. It was a very interesting idea indeed, but there was no way people could have imagined that it would have serious applications. Now though, I am aware of the fact that there are multiple startups that are implementing these ideas. But these possibilities were not at all obvious, and not even on the horizon back then.

Actually, I remember seeing an article about Shafi Goldwasser featured in an Israeli women’s magazine that usually focused on beauty and similar topics. This article was in Hebrew and was about Shafi Goldwasser and her work in zero knowledge.

Wonderful. So zero knowledge had already become part of local culture. I mean, if it could be published in a women’s magazine!

ID: Yeah! It had made it to popular culture.

You mentioned these influential and personally decisive courses that you took, especially topology and computational complexity. Is there anything else that you think was a significant event from those days?

ID: After high school, I served in the military for two years as part of compulsory military service, which was boring and felt like a waste of time. So, when I later went to do my undergrad, it was as if the world had suddenly opened up for me. Suddenly I could study math all day long, and also was not even expected to do anything else. It was as though somebody wanted me to do exactly what I myself loved doing! It was a fantastic experience, especially coming as it did after years in the military.

While you were conscripted, could you still take some time out for study? Or, was it military service all the time?

ID: Yes! I could take some courses after my working hours, which I also did a bit. But the environment was not very conducive for study and research, at least where I was posted. It was not a combat zone, but more of an office job, which I found very mundane and uninteresting.

Coming to your choice of Tel Aviv University for undergrad education. Did you consider Weizmann, Hebrew University of Jerusalem, Technion, or other universities?

ID: I made my decision simply based on where I wanted to live during those years. I did not put in too much thought. And I kind of just went with the flow of things. I did not plan to look far ahead. In fact, I was in a similar state of mind when I went for my PhD as well.

At Weizmann, there were all these really cool people that I have already mentioned – Shafi Goldwasser, Adi Shamir, and Oded Goldreich. So I knew that Weizmann was very strong in Computer Science. I even went there once just to look around, but the fact of the matter is that I felt intimidated by the place. So, I came back thinking that I should just continue to stay and work where I already was.

I didn’t have the confidence to explore things and then make an informed choice in a way that I see students operating today. Nowadays students are very knowledgeable about their options, and they have the confidence to pick and choose. But, I was way too shy and diffident! I remember at some point during my PhD, I became very interested in some of the things that Avi Wigderson was pursuing. And someone suggested to me that I should just go and talk to him. Instead of approaching him, I convinced myself that he would never want to talk to someone like me. That is how shy I was. Today, I wish someone had talked to me back then, and helped me rid myself of my shyness, and urged me to be a little more open. It took me considerable time to get over my shyness.

I became very interested in some of the things that Avi Wigderson was pursuing

We now move on to more technical things from here. You’re known for your insightful work in the area of probabilistically checkable proofs (PCP). What exactly is a PCP?

ID: Sure. PCP stands for a `Probabilistically Checkable Proof’, as you mentioned. When it was developed, it was a revolutionary way to think about the very notion of proofs. In those days, people were developing novel notions in cryptography, including how to convince another individual about the truth of a certain statement. Suppose you have two parties that are required to interact harmoniously with each other, it becomes imperative that both the parties agree on what even the notion of a proof is. Here the early pioneers realized that randomness and interaction could play a huge role in the construction of novel kinds of proofs. And that’s how interactive proofs were developed. And PCPs were a kind of a proof where one doesn’t have interaction, and where a prover writes down the proof.

Okay.

ID: But the difference lies in the nature of the verifier – that is, the person who checks the correctness of the proof. The verifier is here no longer constrained to work purely deterministically, but rather can now also employ randomness. And one can therefore immediately ask, “What advantage does randomness provide to the verifier?” And the answer to that question coming from the subject of PCPs is that instead of carefully reading every word in every line of what could very well be an extremely long proof, the verifier can randomly read just a little bit, or perhaps only a little part of an otherwise entire long proof; and yet, miraculously, still arrive with high probability, at the correct conclusion!

It took me considerable time to get over my shyness

Really?

ID: Yes, and it’s even more amazing because we usually think of proofs as being very brittle. Think of a very long geometrical proof where you have axioms and rules of derivation; and where every inference at every step is the logical cumulative outcome of all of its previous steps. These proofs have a very linear structure. Suppose that there is a bug somewhere quite early in what is actually a long proof containing say, a thousand lines. Let us introduce a bug or an error somewhere in the proof, say in line 25. Now even though each of the successive individual statements of the proof appearing further downstream of where the bug is introduced are valid and correct in themselves, the final conclusion or inference that will be drawn from the full proof will very likely turn out to be wrong.

Exactly.

ID: In a PCP though, this possibility cannot happen. The proof is designed to remain so robust that even if the verifier reads perhaps even just a little bit of the proof in accordance with a distribution that the PCP specifies, one can still be convinced that there is a very high chance that the proof is correct.

This is really bordering on the fantastic! It’s both non-obvious and non-trivial at the same time. Is a concrete manifestation of this idea available?

ID: Yes! It is a celebrated theorem proven in the early 90s in two papers, the first2 by Sanjeev Arora and Muli Safra; and the second3 by Sanjeev Arora, Carsten Lund, Rajeev Motwani, Madhu Sudan and Mario Szegedy. I want to add that it is also seen as an amazing theorem because people didn’t really believe that such a stunningly bold claim could actually turn out to be true. I think that an initial sense of disbelief in people’s minds which later gets convincingly transformed to a pleasant surprise, is personally speaking part of the subject’s magical allure. Pioneers of this subject originally came up with some theorems without necessarily thinking of their potential applications for the concrete world. They just thought about these ideas abstractly – only wondering if this thing could even be true at all. And miraculously, their early intuition and hunch eventually turned out to be true.

Wow!

ID: This gets even more amazing. A parallel development that took place at roughly the same time around which the PCP theorem itself was conceived, was in the area of approximation algorithms. People were trying to understand the limits to which certain optimization problems could be solved. It was known that finding optimal solutions to some of those problems was, computationally speaking, NP-hard. Yet they hoped that perhaps it was not so hard after all to compute reasonably good approximate solutions to optimisation problems. For example, if one takes a graph, and it is now required to find the size of the largest clique of the graph. This exercise was known to be NP-hard.

Okay!

ID: So the following question was posed. Can one find a good enough approximate solution to the problem of the largest clique? Further, can one find a clique that’s half the size, or 0.1 times that of the size of the largest clique? Uriel Feige and Laszlo Lovasz were working on this problem, trying to find an approximation algorithm for the largest clique.

And coincidentally, these two research directions came together in this famous work of FGLSS,4 where they showed that the PCP theorem implied that it was not just NP-hard to compute the largest clique `exactly’, but also equally hard to compute even a reasonable `approximation’ of the same. It must be noted that at that time what we now call the PCP theorem hadn’t even been proved yet! They had much weaker theorems in those days. But they showed that PCPs provided an amazing bridge between two distinct areas of study that outwardly appeared to be unconnected. The lesson coming out of this was that PCPs had a big role to play in understanding the hardness of approximation.

That’s a great synthesis of ideas which superficially appeared to be disjoint and unrelated to each other, but were mysteriously connected via hidden and non-obvious bridges.

ID: Yes! Hardness of approximation is now a highly developed and rich area of study. These days, especially since the last decade, I want to add that a surprising and exciting novel development is that PCPs are now used in practical applications such as speeding up blockchain computations, thus making them more efficient.

That’s very interesting! Going back a little bit to the time when you started off on your research on PCP, what was the then prevailing state of affairs in its study? Also, what prompted you to start thinking of an approach different from the then prevalent approach?

ID: When I was a grad student, my advisor was Muli Safra and who was also one of the pioneers of the PCP theorem. Muli was one of the authors in the FGLSS paper. The PCP theorem, as I mentioned earlier, is also attributed to a jointly authored paper by Sanjeev Arora and Muli Safra together with the ALMSS5 paper. Muli was certainly one of the leaders pushing PCPs forward, and so naturally I went to him to study that.

Back then, the PCP theorem appeared to me to be highly complicated. The proof consists of several different parts, and during my PhD I slowly understood all of these parts. I wrote papers that built on earlier work, and so I got to learn all the things involved quite well. Yet, a lot of the steps involved seemed almost a bit too magical in how they worked, and also were finally put together. By magical, I mean `hard to believe’! Crucially, I knew for instance “how” to prove that they worked. But I didn’t know “why” it worked. For instance, they use low-degree polynomials which are in themselves marvelous objects. They have so many nice properties that when you are using say a particular low-degree polynomial, you don’t really know which among those nice properties is it that you are really benefitting from.

a lot of the steps involved in PCP theorem seemed almost a bit too magical in how they worked

Hmm!

ID: Eventually, I went to IAS in Princeton as a postdoc. When you go to a different place and away from your advisor, you start thinking about things more independently, and by yourself. I had already by then realized that I didn’t understand why the PCP theorem really worked. I was confident that, metaphorically speaking, I had already sort of understood how all the moving pieces came together, and meshed with each other. But you realize that at a deeper level, you’re not really happy with your present understanding. Why were certain things the way they were? Was an alternate approach feasible? And then one day I was talking to Omer Reingold, who was also a postdoc there, and a little older than me. And we decided to think about it from scratch, purely as an intellectual endeavor. Let’s try to think of other ways to do it, we told ourselves.

Which year was this in?

ID: This was in 2002 and 2003. And we set off on this exercise as a purely intellectual endeavor, without setting ourselves a specific goal. We wanted to come up with different proofs, thus enhancing and refining our current rather inadequate understanding. By the way, we did find different approaches to proving the PCP theorem. We came up with a notion called an Assignment Tester, which was also incidentally discovered in parallel by another group of people who called it the `PCP of Proximity’. And that name has now indeed become the standard terminology to this day. We managed to construct tensor-based proofs of the PCP theorem, but even this still didn’t quite prove the PCP theorem. We had weaker parameters I think, but still it was an important step ahead for me.

I knew for instance “how” to prove that they worked. But I didn’t know “why” it worked

I later came back to Israel after my postdoctoral work at IAS, and took up a position as a young member of the faculty of Computer Science at HUJI (The Hebrew University of Jerusalem). I also taught my first course on PCPs there. While teaching this course, I once again felt frustrated that we were teaching our students a bunch of `magical’ constructions! And further, I again strongly felt that I really did not understand why we were doing these constructions at all! Was there a good reason to do it only this way, and not via some other way? And that’s how I started thinking about this idea of a `gap amplification’ during that semester. And then we had a semester break. When you are teaching a course, you are so engrossed in it, and then suddenly you have the semester break. All of a sudden, you have so much time for yourself, and you’re also so energized by the students. The course was so very energizing for me.

Hmm!

ID: So in the break, I worked on this and came up with the rather lovely idea of gap amplification. Looking at it, I felt very happy with myself.

So is this the genesis of your intellectual angst that eventually led to the breakthrough discovery?

ID: Precisely!

That is a great story! Can you provide a synthesis of both a panoramic perspective, and the key details involved therein—thus metaphorically combining both the eagle’s and the worm’s views ?

ID: A very poetic question! I have addressed some of this already. Additionally, I want to mention one more thing that I feel played a key role here. In my last year of postdoctoral studies, I was working as a Miller Fellow at UC Berkeley. And my mentor there was the (late) Luca Trevisan. And in the last summer that I spent there before I headed back to Israel, Luca had a grant together with Salil Vadhan and Omer Reingold. So they both came to visit the San Francisco area. And all four of us would get out and talk about research ideas. We were really interested in a question which is incidentally still open to this day, “RL = L”.6 This is to decide whether log space with randomness can be simulated by deterministic log space.

Very interesting, yes!

ID: So we worked on that, and we talked about various kinds of randomizations, walks on expanders, and so on. That’s when one morning I reached a local coffee shop where we were supposed to meet, and I saw that Omer and Luca were already there, just sitting quietly. I asked Luca what was going on, and then Luca told me “Oh, Omer just proved that SL = L”!7 Of course, I thought they were playing a joke. But then Luca showed me the proof that Omer had come up with, literally overnight.

Wow!

ID: We had all met just a day earlier, and then we were meeting again the very next day—by which time Omer now had a ready proof! It was quite simply amazing, and we immediately got into the details of his proof—trying to understand walks on expanders, and how one did aggregation in the so-called zig-zag product. This got me thinking deeply about the power available with this kind of a recursive structure, and also in walks on expanders.

You mean random walks on expanders?

ID: Yes! Also, seen in an intuitive but inevitably imprecise way, one executes two different kinds of random walks in accordance with the two moves of the `zig-zag product’. Here, the first move involves a random walk that essentially happens `inside’ a precisely defined local region of a certain graph—this is the zig; the second step involves a random walk that is longer than the zig, but extends to regions that lie `outside’ the earlier locally defined region—this is the zag. To wit, a local zig is immediately followed by a non-local zag. One essentially keeps repeating these two alternating steps, recursively. This is the essence of the paper by Omer Reingold, Salil Vadhan and Avi Wigderson published in the Annals of Mathematics in 20028 on the celebrated construction of constant-degree expander graphs, employing zig-zag products.

Very interesting!

ID: In my earlier work with Omer on building combinatorial PCPs, we had different kinds of composition steps that had given us some results, but we did not have very good parameters then. So perhaps even without realizing it, I was influenced by these two things. I kept trying to think of a recursion in a form either as it appears in the SL = L proof, or, as it occurs in the zig-zag product. The zig-zag idea was developed somewhere around the year 2000 and later published in 2002. During those very days, I was interacting a lot with these very people, and obviously got influenced by their ideas. So, I wondered whether a composition occurring in a proof with somewhat comparable structure could also be constructed for PCPs.

Wow! This amazing story that you have just narrated about what transpired in that Berkeley coffee house will stay in our minds for a long time.

ID: It is almost unbelievable! Except that it is a true story, and I remember every bit of it so vividly.

You employ a notion known as influence to great effect in your treatment of the hardness of approximation. Equally intriguing here is the appearance of Fourier analysis in what is essentially a discrete setting. Can you please elucidate the idea of influence? Also on how Fourier analysis, traditionally used to study continuous phenomena, is now employed in the novel context of Boolean functions—a canonically discrete setting?

ID: For the record, my work on gap amplification was published in the Journal of the ACM.9 My earlier work from my PhD days was about the hardness of approximation of vertex cover, and this work was published in the Annals of Mathematics in 200510 with my PhD thesis advisor Muli Safra as a co-author. In that work on the hardness of vertex cover, we use a certain notion of influence which comes to us from the analysis of Boolean functions.

Even before that, Muli Safra and I had written a paper titled “The importance of being biased”11 back in 2002. A couple of years before that, there was a breakthrough sequence of two works by Johan Hastad12 proving optimal hardness of approximation for some very important problems, one among which was 3SAT. If you’re given a 3SAT instance with a bunch of 3CNF clauses and asked whether this is satisfiable or not, such an exercise is known to be NP-hard. A further allied question that arises is when now you’re guaranteed a priori that it’s indeed satisfiable. In this guaranteed situation, how many clauses can one satisfy?

He also proved a bunch of other nice results. I’m just mentioning this one because I think it’s in any case already amazing to even show that random assignment is the best possible option. His results turned out to be doubly amazing: first, for the insightful results; and secondly, for the techniques he employed to get there.

Relevantly, this was also the first instance where the Fourier analysis of Boolean functions was applied to the area of hardness of approximation. In what was then a pioneering idea, Hastad represented functions in their Fourier basis. This was very surprising for us since we didn’t understand this stuff at all. It looked pretty complicated, and one could not easily enter these areas. And it took us close to two years or so just to get a grip on these ideas; but when you’re young, two years is a lot of time.

Anyway Muli Safra, my advisor, was convinced about the importance of this approach; and insisted that we should understand it quite well. There were experts in that area like Gil Kalai and Ehud Friedgut, who were luckily both fellow colleagues in HUJI; and I knew that Muli was friends with them.

when you’re young, two years is a lot of time

Very interesting!

ID: Kalai and Friedgut had already developed ideas that were even more advanced than the notion of influence of variables on a Boolean function. Here is a brief overview of what is going on. It starts from thinking of a function which has say n Boolean variables. So, each variable is either a zero or a one. Say, somewhat like a yes or a no. The Boolean function itself is a kind of an aggregation of all the yes and no answers of all the variables, and decides whether to finally output either a collective yes, or a collective no. And then a natural question that arises here focuses on every single one, that is each of the n variables that the function as a whole depends upon. For instance, consider only the i-th variable for a start. One can now ask “How much influence does this chosen variable alone exert on the final output of the function itself”? To make things really simple, let us start with every other variable staying fixed, and letting only the chosen i-th variable change its value. The thing to check now is whether this lone change in the input ended up influencing the total outcome, or not. That in a nutshell, is the notion of influence.

It turns out that there’s a nice mathematical expression for influence in terms of the Fourier coefficients of the function; and somehow miraculously, this in turn can be used to analyze reductions or constructions that one employs while trying to prove hardness of approximation. This idea was used in a rather sophisticated and novel way in our own paper on the hardness of vertex cover. Let me also add that in our paper on the hardness of approximating the vertex cover, we studied more advanced Fourier analytical ideas such as biased distributions, and average noise sensitivity—advanced concepts from the area of analysis of Boolean functions.

I see!

ID: Later on, there were papers that pushed the envelope even further. I have not been a part of these later advances, but certainly admire them from the sidelines. For instance, the paper of Subhash Khot on the Unique Games Conjecture. This work allowed us to separate out the label cover component, from the combinatorial component. The label cover component was “conjectured away” by Khot’s Unique Games Conjecture, and this allowed focus to shift towards the combinatorial component, which is all about analysis of Boolean functions. This gave rise to many exciting results and a rich theory, as seen in Ryan O’Donnell’s book Analysis of Boolean Functions.

For example, the paper by (KKMO) Subhash Khot, Guy Kindler, Elchanan Mossel and Ryan O’Donnell came out where they had a conjecture on Boolean functions which implied hardness for Max Cut using the Unique Games Conjecture. This then led to a proof of the Invariance Principle which is now both a very important, and a foundational theorem in the analysis of Boolean functions. Prasad Raghavendra took this even further and showed that it implies optimal hardness for every Constraint Satisfaction Problem. So this area has really developed and evolved way beyond our paper on vertex cover.

Superbly put! Thank you for both the panoramic and a well curated view of the whole area! Via your contributions, you also built a bridge between what was till then two disparate areas of computer science.

ID: Maybe I did! Which also led me to things that I have been working on, since the past decade or so. The ideas that connected expanders to PCPs was a relatively novel advance back then in my early work on gap amplification. But since then, it has evolved to forge even more stronger and illuminating connections leading to our recent works on high dimensional expanders.

Could you please elaborate on this nontrivial development?

ID: I will first tell you how I personally see it in my own mind, perhaps a bit philosophically speaking. It’s about the appreciation that we have for the proof of the PCP theorem. It appears to me to be an amazingly beautiful, yet solitary diamond shining in the dark. But at the same time, we simply do not know where on earth it came from. Or, did it just simply drop down from the sky? I have sometimes felt that it was not connected to anything concrete while still situated amidst a whole variety of potentially rich intellectual possibilities. Our work on high dimensional expanders is another similar situation. This work too, I feel, appears to be like another solitary shining diamond. But luckily here, it has slowly started to yield some of its secrets – throwing light and pointing to a more cohesive area of underpinning knowledge, where one can see the various threads of ideas manifesting in manifold ways. So, the situation here is slightly richer and also more versatile. We’re not fully there yet, but it is my desire and fond hope that we will get there soon.

It appears to me to be an amazingly beautiful, yet solitary diamond shining in the dark

Beautifully put! We loved the imagery that your words ended up creating in our own minds. In an interesting coincidence, the motif that we have chosen for this very mathematical magazine Bhāvanā is also an expander. What we have chosen is actually a stylized version of a Ramanujan graph.

ID: That’s a pleasant surprise!

Expander graphs are known to possess interesting and useful combinatorial properties. Could you please elucidate how these properties are used in your own work?

ID: Sure. Certain types of expander graphs are known as Ramanujan graphs, in honour of the great Indian mathematician. What makes them special is that they are optimal expanders; and even though they are finite objects, they function like an infinite tree.

Ramanujan graphs have an optimal property in terms of their spectral gap. One way to see it is that even though it is different from the infinite d-regular tree, this finite graph imitates something that goes on in the infinite tree. When one looks at a random walk on the infinite tree, it always reaches new places as fast as possible. In an expander graph, or also in a Ramanujan graph, a similar property is seen to occur, even though it’s finite. This seems particularly paradoxical because when you’re in a finite object, you’re in a space that is very different from one that keeps growing without a bound.

Yes.

ID: Now in the case of a PCP, if at all there is any error or a bug anywhere in the proof, you would ideally want its effect to spread and propagate in all directions, and as quickly as possible. This is desirable so that the error can be detected no matter which random region the verifier chooses to inspect in order to verify the correctness of the proof. This not-so-obvious similarity in the fast propagation of errors is what connects PCPs and Ramanujan graphs; and that’s how these two conceptually and functionally very different things come together, and get forged. In a PCP, one would like to ensure the spread of errors as fast as they possibly could, ideally to everywhere in the proof.

even though they are finite objects, they function like an infinite tree

So that its detection is done at the earliest. Is that the idea?

ID: Yes! You want to allow for both maximum detection, and maximum early detection – to the fullest extent possible. I want to add something more about Ramanujan. There are objects known as `Ramanujan Complexes’, which simply put, are higher dimensional avatars of Ramanujan graphs. And here, instead of thinking of the infinite tree as your ideal object as before, you now think of a corresponding two-dimensional, or a d-dimensional ideal object. This object comes to us from Number Theory, and is called a “Bruhat-Tits Building” in honour of Francois Bruhat and Jacques Tits. As far as the finiteness analogy is concerned, think of it as a suitably chosen finite quotient of the corresponding infinite object, and which also serves as a good finite approximation to the infinite object. This is how high dimensional expanders come up.

Wonderful! There is a highly interesting idea in many of your works that seems to mysteriously connect the local and the global aspects of the constituent parts. We see it play out in PCPs, Expanders, and in other works as well. Please lead us through this exciting development.

ID: In a PCP one is trying to decide upon the potential correctness of a proof, which in itself is essentially a global statement, by looking at multiple local, and necessarily smaller pieces of the same. These small pieces of the proof that are observed and inferred from, constitute the local views. One does this exercise at various local areas of the proof, and thus ends up collecting many local views of a single global object. So, a PCP inherently has this local to global feel to it. Expanders, and even more so, high dimensional expanders both have strong local to global properties.

Hmm!

ID: There are two ways to think about this. One is that, viewed from the point of operational efficiency, this presents a highly efficient strategy. Instead of having to look at the entire global object, one can be satisfied looking at some set of randomly chosen smaller pieces of the same. And yet, one retains the surprising ability to make correct and insightful globally valid inferences. So, this is computationally both efficient and insightful.

`Ramanujan Complexes’, which simply put, are higher dimensional avatars of Ramanujan graphs

Right!

ID: The second viewpoint is more philosophical in nature, and gives us confidence in the conclusions that we draw as scientists. That is because, at best, all of us only have access to partial and local views of the world that we inhabit. Yet, we use those very local measurements or partial observations in order to make intelligent deductions that remarkably hold true in a much more global sense. So, even without our knowledge, we are always relying on a certain local to global feature operating out even in our mundane and daily activities.

a PCP inherently has this local to global feel to it.

Is this seen even in the area of `Property Testing’?

ID: Oh yes! This local to global feature is also at the heart of the field of Property Testing. Here too, one is looking at large global objects, say a graph like the internet; or maybe at a very big distribution representing samples in some Large Language Model (LLM). Again, whenever a big object needs to be sampled, it would help a great deal if we could only look at a much smaller set of local samples, and yet be able to draw conclusions that are not only informative, but based on which one can further prove that the inferences drawn too, are correct to a high probability. So efficient property testing algorithms too, are highly dependent on a useful connection between local and global properties.

Is there an insight that has emerged from the study of the kinship between PCPs and other areas of study that was surprising and unexpected?

ID: Yes! But before that let us just remind ourselves that PCPs in themselves are not interactive proofs. The prover writes down the proof, and the verifier checks it. So, there’s no back and forth interaction here between the prover and the verifier. Still, the simple fact that the verifier is not doing deterministic verification but actually employing randomness, lends considerable power to the PCP. I also talked earlier about how a paper by FGLSS discovered the connection between PCPs and hardness of approximation. They demonstrated how one can prove hardness of approximation for the clique using PCPs. All of this is explicitly available out there.

But the connection between the two, philosophically speaking, runs much deeper! It’s in fact `two-way’ in the following subtle sense: “Any PCP theorem can also be interpreted as a hardness of approximation result’’! In a PCP, one simply enumerates using the randomness chosen by the verifier to arrive at a long list of small computations. In case the proof itself is correct, all the computations in the list that was just generated are now guaranteed to successfully go through. But in case the proof itself is not correct, only a certain small and definite fraction of the computations can be successfully carried out. So, to paraphrase and drive home the point: There’s always a gap present in such an instance, and it’s always going to be a hardness of approximation result. More so, this connection is `syntactic’, in the sense that it is just really two different ways to think about what is essentially one.

That is a subtle insight! Is this insight also hinting at something deeper?

ID: Well, let me add that at some point in time it started to become clear that there is some kind of a standard paradigm available to prove strong hardness of approximation results. And the first component of this paradigm is the ‘label cover’. This is an NP-hardness result that encapsulates the PCP theorem, including very strong forms of the PCP theorem. The second component involves adding gadgets on top of the label cover, wherein the gadgets are invariably a variant of the Boolean hypercube. To analyze the composition of the gadgets with the label cover, we rely on some deep results from the analysis of Boolean functions.

Interesting!

ID: Here is also where Subhash Khot comes in. What Khot said was that if one had an even stronger label cover, then it would be possible to use even stronger results originating from a deeper analysis of Boolean functions. I think around that time he had been thinking of a theorem due to the legendary Fields Medal winning mathematician Jean Bourgain, and about which he was highly impressed. This is what I have heard from him.

Further, he wanted to apply this theorem due to Bourgain in a context involving the hardness of approximation. But he realised that he could do it only if he had a very special type of label cover. And this is how he started thinking about Unique Games, and eventually realized that perhaps it may even be NP-hard. This led him to make the famous Unique Games Conjecture (UGC), which has so far turned out to be incredibly influential. UGC helped him achieve something really important – it allowed him to separate the PCP part from the rest of the problem. In Khot’s approach, the PCP part is already well encapsulated inside a Unique Game, and which itself is a kind of a strong label cover. So now, one need not think about the underlying PCP at all. Instead, one can now completely shift all the attention towards the analysis of the relevant Boolean functions. I don’t know if he knew it ahead of time, but this separation turned out to be amazingly fruitful, driving forward both the areas of approximability, and analysis of Boolean functions.

PCPs had a big role to play in understanding the hardness of approximation

This approach due to Khot went on for a while. And then people started getting worried that they didn’t really know how to prove the hardness of unique games. And so they surmised that it could possibly even be false. This hunch led people to try to find algorithms for falsifying the Unique Games Conjecture.

But works originating since the past ten years or so by Dor Minzer and Muli Safra, and some of which I too have been a part of, have resulted in a variant of the Unique Games Conjecture called the 2-to-2 games theorem. I personally think that these works really boost the community’s confidence that the UGC is highly likely to be true, although a lot of questions still persist.

Amazing account! What is also becoming clear is how seemingly unconnected ideas are subtly and deeply interconnected. Which brings us to another rather mysterious link that we want you to elucidate for us. Why is there a kinship at all between error correcting codes (ECC) and probabilistically checkable proofs? Where do they meet or converge?

ID: Yes, I want to say two things here. Firstly, that the point of convergence is the work of Madhu Sudan. Secondly, PCPs and ECCs are two areas that directly benefit from low degree polynomial codes. He is a pioneering contributor to both these areas and is therefore a natural meeting point. It must be noted that error correcting codes are essentially about being resilient to errors; and about presenting information in a form that makes it robust so that even if errors are introduced, the desired information can still be recovered. And it is important to note here that in an essential sense, PCPs too are about resilience to errors.

In PCPs, one is trying to transform the given proof into a special form, a PCP form, so that even if people try to cheat by introducing errors in the proof, the errors can be miraculously found everywhere. And the fact that the verifier must be able to notice the error even if he chooses to read only a small, randomly chosen part of the proof, means that the errors must appear in many places. If they didn’t, the verifier wouldn’t be able to notice it. So intrinsic to the very notion of a PCP is an inbuilt resilience to errors, just as in an error correcting code. And it must be mentioned that we employ a lot of error correcting codes in the construction of PCPs. To wit, error correcting codes are all over the place in PCPs!

Terrific! This is a question about one of your recent joint works that is scheduled for publication in an upcoming issue of the Annals of Mathematics, and based upon the idea of locally testable codes. To set the context, I quote the opinion of two eminent experts. Harvard’s Madhu Sudan says that this work is something that had always seemed to be implausible, but which has now suddenly become doable. Cambridge University’s Tom Gur refers to it as the field’s long awaited Holy Grail! Could you kindly lead us through the details of this breakthrough and highlight its applications, while elucidating challenges that had stalled progress for many decades.

ID: This work belongs to a collection of works that study local to global aspects of high-dimensional expanders. And there has been a persistent sentiment that these objects, i.e., high-dimensional expanders, should help us construct new error-correcting codes that possess useful properties like local testability. And at the same time, it should be noted that they’re different from the low-degree polynomial error correcting codes that were used in earlier constructions of PCPs, and additionally offered local testability too! Popularly known as Reed–Muller codes, these low-degree polynomial codes had a key limitation. Alas, they are not constant rate codes!

And as I mentioned earlier, there was a feeling that low-degree polynomials enjoyed so many great properties that it was not clear which of them were operationally and structurally crucial, and which of them simply happened to be there. Incidentally, there are other contenders too, such as Tensor Codes.

But speaking of our own interests, there was a really big push to try and construct codes with the desired qualities while employing ideas and tools from the area of high-dimensional expanders. In this work, we were working with a group of mathematicians that are really pure mathematicians.

With Alexander Lubotzky?

ID: Yes! I joined forces with Alexander Lubotzky, Shahar Mozes, Ron Livne and Shai Evra. And the five of us went about trying to construct codes on Ramanujan complexes. We believed that Ramanujan complexes were optimal in many ways, and therefore should be our way forward. But we just weren’t able to push it through. And then we had an idea to proceed in a slightly different direction. This change eventually resulted in a much simpler, easily describable, and I must admit, a very cute and elegant construction at the end. At least that is how I feel. Well, perhaps I shouldn’t be gushing about it all by myself!

Ha ha ha! Not at all. In fact, this is exactly why we wanted to hear about what transpired during its creation straight from one of the key co-creators of this stunning advance. I mean, there’s an incredible amount of excitement everywhere about this work.

ID: I’m very happy about this work, really! It now serves as a concrete proof of concept, and as a demonstration that the codes that we sought to build using novel insights emerging from the topic of high dimensional expansion, are now tangibly available with us. Remarkably, one can take the ideas involved even further, and extract more novel things out of them. Since the announcement of our work, there have been certain nice results – such as building PCPs from high dimensional expanders, and so on. So prospects for the concept’s future appear promising.

Incredible! In fact, it became even more intriguing when we heard that researchers working in a completely different area had also succeeded in discovering ideas closely related to your own team’s breakthroughs, and also around the same time as well. This parallel development apparently happened in the pursuit of a long-sought after goal – construction of a particular kind of error correcting codes for quantum computers, called Quantum LDPC Codes. Please lead us through this very rare coincidence.

ID: Oh yes! That was another wholly unexpected and amazing turn of events. Parallel to our own work, and with all of us remaining totally oblivious of developments there, a couple of researchers working in Russia by the names of Pavel Panteleev and Gleb Kalachev,13 has made an astonishing advance. Quantum error correcting codes with desirable scaling properties have typically been hard to construct despite considerable efforts.

In a very rare and beautiful scientific coincidence, just after we wrapped up our work on the construction of locally testable codes, it turned out that Pantaleev and Kalachev had also arrived at almost exactly the same construction. Further, their work too was based on almost exactly the same set of ideas. To top it, they had achieved something more than what we ourselves had been able to. They had shown that the construction additionally enjoyed a really special feature.

This is getting very interesting!

ID: Let me elaborate things a little bit. The construction can be thought of as a chain of linear maps. One linear map followed by another linear map. Here, we had analyzed this chain only in one direction, say going from left to right. Since it is essentially a chain, they went ahead and analyzed it by looking at it in two directions, both from left to right and as linear maps. After having looked at it in both directions, what they really came up with was a long-awaited breakthrough: a quantum code that possessed both constant rate, and constant relative quantum distance. This had been an unresolved open question for a long while till that moment, and obviously was a very exciting breakthrough for the quantum error correcting codes community. It was just an incredible coincidence that we both had these closely allied foundational developments simultaneously.

It was incredible coincidence that we both had these closely allied foundational developments simultaneously

Your collaborator Tali Kaufman in her invited address at the International Congress of Mathematicians (ICM) in 202214 makes a strong case for higher dimensional expanders. What really happens in higher dimensions vis-a-vis expanders, and further, why is going higher inevitable as per the title of her address?

ID: Tali Kaufman is really the pioneer in this area together with Alex Lubotzky. I joined them only much later. Regarding your specific query based on the title of her ICM 2022 address, well I really do not know about its inevitability. But generally speaking, higher dimensional expansion gives us much stronger local to global behavior. Somehow in dimension one, there is not enough rigidity. But in higher dimensions, there’s a lot of rigidity. And as a consequence, this amounts to saying that if a property is satisfied in some local region, then there is an agency which compels the system to satisfy the same everywhere, that is globally! This is simultaneously both useful and insightful. It gives one lots of interesting options, thanks to this connection between the local and the global.

RL=L and BPP=P are both open problems inquiring whether randomness could save space or time in computation. These questions also strengthen the idea of viewing randomness as a computational resource. Wigderson’s works that considered randomness as a computational resource lead to the study of combinatorial objects such as expander graphs. Even Reingold’s SL=L result using expanders was a step towards resolving whether RL=L? However, while studying expanders, your ideas diverged and you instead began to explore randomness as a resource for robustness. This departure is seen to be a common thread across your works ranging from differential privacy, to robust PCPs. What inspired you to think differently here?

ID: I guess I was interested in varied applications, and so that’s where I took inspiration from for these objects. For both threads though, expanders provide a kind of robust version of connectivity. For PCPs, this robustness can be useful for creating robust proofs, namely Robust PCPs. For derandomization, this robustness can be used to replace truly random bits by pseudorandom ones.

You were awarded the Paris Kanellakis Award in 2021. We would like to know how you felt on receiving that award, and what it meant to you.

ID: It’s an award that recognises work which manages to combine both theory and practice. And I received this award jointly with five other esteemed colleagues for developing some parts of an area of privacy known as differential privacy. This was an early work of mine from my postdoctoral days done with Kobbi Nissim. Kobbi and I worked on this while we were both in Princeton, and we never really imagined where it would lead us to. It just seemed very interesting at the time, and we had a lot of fun working together. When the paper was finally written up, and it was time to give talks on the subject, I was comfortable to hide behind Kobbi. This was a paper that tried to define privacy but didn’t yet fully succeed. We proposed some definitions and showed how some setups were certain to fail. It really caused people to think deeply on these aspects, and often resulted in objections raised during talks. Kobbi loved it, but for me it was a bit too controversial…. Indeed, he stayed on in the area, and went on to write the paper that defines differential privacy with other colleagues. I was just very happy to have played my part in this. We were thinking about these things when we were still postdocs, explored different solutions and convinced ourselves that they can easily be broken. We analyzed the amount of noise necessary for privacy to be preserved. And since I like to prove hardness and impossibility results, our work was essentially an impossibility result. But it still served as a positive starting point for a follow-up work that defined what privacy is. The ability to now clearly define what privacy is, has been a positive outcome of our research.

That is great! Finally, we note that you have visited India on many occasions. Would you like to share with us your impressions of India?

ID: I have been to India several times, the last one being sometime in 2022 or so. I wasn’t able to come to the 2025 workshop in ICTS-TIFR on High Dimensional Expanders despite having co-organized it. I had earlier given a plenary talk at ICM in Hyderabad back in 2010, and have had visits to meet my friend and colleague Prahladh Harsha in TIFR Mumbai. I have fond memories of India.

Thank you so much. This conversation has been immensely insightful for all of us here. With a deep sense of gratitude, we thank you again for your valuable time and attention. \blacksquare

Footnotes

- “Probabilistic encryption and how to play mental poker keeping secret all partial information”. STOC ’82: Proceedings of the fourteenth annual ACM Symposium on Theory of Computing, 1982, pp 365-377, https://doi.org/10.1145/800070.802212. ↩

- “Probabilistic checking of proofs: A new characterization of NP”. Proceedings of the 33rd IEEE Symposium on Foundations of Computer Science. IEEE, New York, pp. 2-12. 1992. ↩

- “Proof verification and intractability of approximation problems”. In Proceedings of the 33rd IEEE Symposium on Foundations of Computer Science. IEEE, New York, pp. 13-22. 1992. ↩

- Uriel Feige, Shafi Goldwasser, Laszlo Lovász, Shmuel Safra, Mario Szegedy: “Interactive proofs and the hardness of approximating cliques” Journal of the ACM (JACM), Vol 43, Issue 2 pp 268–292, https://doi.org/10.1145/226643.226652. ↩

- Sanjeev Arora, Carsten Lund, Rajeev Motwani, Madhu Sudan, Mario Szegedy: “Proof verification and the hardness of approximation problems”, Journal of the ACM (JACM), Vol 45, Issue 3 pp 501-555, 1998, https://doi.org/10.1145/278298.278306. ↩

- The complexity class L consists of decision problems solvable by a deterministic Turing machine with logarithmic space or memory (in the input size). RL and NL are its randomized and non-deterministic counterparts, respectively. By definition, L \subseteq RL \subseteq NL. RL=L is a widely believed conjecture and a longstanding open problem. ↩

- SL consists of decision problems solvable by symmetric non-deterministic Turing machines using logarithmic space. L \subseteq SL \subseteq RL \subseteq NL. Reachability in undirected and directed graphs are known to be SL-complete and NL-complete problems, respectively. Reingold’s result SL=L in 2005 was a key breakthrough, and subsequent work by Reingold, Trevisan and Vadhan (https://dl.acm.org/doi/10.1145/1132516.1132583) in 2006 offered an approach to the resolution of RL=L. ↩

- “Entropy waves, the zig-zag graph product, and new constant-degree expanders”, Volume 155 (2002), Issue 1, https://doi.org/10.2307/3062153. ↩

- “The PCP theorem by gap amplification”, JACM, Volume 54 (2007), Issue 3, https://doi.org/10.1145/1236457.1236459. ↩

- https://doi.org/10.4007/annals.2005.162.439. ↩

- https://doi.org/10.1145/509907.5099. ↩

- Johan Håstad “Clique is hard to approximate within n^{1− \varepsilon}” Acta Mathematica, 182(1): 105-142 (1999). https://doi.org/10.1007/BF02392825↩

- Pavel Panteleev, Gleb Kalachev “Asymptotically good Quantum and locally testable classical LDPC codes” STOC 2022: Proceedings of the 54th Annual ACM SIGACT Symposium on Theory of Computing, Pages 375–388 https://doi.org/10.1145/3519935.3520017↩

- https://ems.press/content/book-chapter-files/33317. ↩