Entering the portals of the illustrious IIT Kanpur as a fresh BTech student in 1982, in the backdrop of a mostly introverted school years in erstwhile Allahabad (now Prayagraj), Manindra Agrawal has always found great joy in engaging with challenging mathematics problems. From emerging on the international scene by fashioning a deterministic polynomial-time algorithm for primality testing to rising to the chair of the Directorate of IIT Kanpur today, Manindra’s academic trajectory has been everything from exciting to fulfilling. The two hours we spent conversing with him, on the evening of the day that marked exactly one year in office as Director, was a refreshing variance from his crisp and quick email responses, and a marked economy of words in communication. As we listened to his accomplished academic journey, the story recounted below captures some of those signal moments, and testifies to all that he shared with an openness of mind.

Thank you for talking to Bhāvanā. We will start with your most well-known work on the AKS (Agrawal–Kayal–Saxena) primality test. How did it all start, and how long were you thinking about the problem?

MA: It started in 1998. Somenath Biswas and I had read a new paper1 that came out around then. It had used a very interesting approach to solve an important problem called Identity Testing. We wondered if it could be applied to other problems as well. And the problem we decided to pick is primality testing—that is how it started. It wasn’t really that I wanted to work on primality testing for a long time. What we had was an approach, and we were looking for a problem to apply the approach to. We only happened to find primality testing to apply it on… As it turned out, that approach did not work, but then we stayed with the problem. We found another approach to solve the problem, which we published in 1999. It was still a probabilistic algorithm, but a different approach. I then felt that this new approach had a lot of potential and that I could probably reduce or remove the randomization part of it. I kept working on it for almost one year. I had a student, Rajat Bhattacharjee, for a BTech project, and I got him to do some experiments. I had a hypothesis, and that experiment tested that hypothesis up to some large number, which turned out to be correct. That was 2001. I then had two more students from the subsequent batch, Nitin Saxena and Neeraj Kayal, doing their BTech project. I asked them to continue with the experiment for even larger numbers, which they did in the first six months, and verified the hypothesis for those larger numbers too. Finally, somewhere around December, I said, “We have done enough experimentation, let us try to prove it’’. We were very convinced that the hypothesis was true. So, that is what we worked on until April, when they wrote their BTech thesis on this. Then they were to go for a PhD. Neeraj had applied to TIFR, Mumbai, and Nitin had applied to a few US-based universities. By April, it became clear that none of the universities Nitin had applied to accepted him. So Nitin decided to join me for a PhD. Neeraj, on seeing that Nitin was joining, decided that he would not go to TIFR, but would join with me as well. Then I said, “You guys are around, so let us continue working in the summers’’, and that’s when we finally solved it. By July 2002, we had the solution.

Can you please elaborate on the initial approach, if it is not too technical?

MA: The approach in the original paper addresses a problem called polynomial identity testing. Suppose you are given a multivariate polynomial in the form of an arithmetic circuit—an arithmetic circuit is a sequence of additions and multiplications. You start with variables x_1 to x_n and then you apply a sequence of operations on these variables—additions and multiplications in some order. Every intermediate step gives you a polynomial. So, you keep repeating this operation, and at the end of it, you will again end up with a final polynomial, the output, whatever it is. The question that is posed is: Is the final polynomial the zero polynomial? That is the identity test. And it has a very simple randomized algorithm for it, which is to just put random integer values for x_1 to x_n and evaluate. If you see a 0 at the end, declare it is a zero polynomial. If you see a non-zero, declare that it is a non-zero polynomial. If you see a non-zero value, the polynomial is definitely non-zero. If you see a 0, the polynomial may still be non-zero, but the probability of that is very small. So, that is it, very simple. Now, if you want to reduce the error, all you need to do is the following: when you are substituting values for x_1 to x_n, you pick a random value from a chosen range of integers. As you increase that range, the probability of making an error goes down. However, the number of random bits you use goes up, because if the range is higher for a variable and you need to pick a random value in this range, you will need a higher number of random bits. If you want to pick a random number from 1024 numbers in the range, you need 10 random bits. You toss a coin 10 times, you get a number, you pick and use that index. If your range is 2048, then you need 11 bits. So, the higher the range, the more the randomness. So, there is a trade-off: if you want to reduce the error, then the randomness goes up.

What this paper did was very interesting. They said, do not pick integer values, pick irrational numbers instead. They chose certain irrational numbers for each of the variables. They said, plug those irrational numbers. It did not need any range. They could actually fix just one irrational number for each variable, say \sqrt{2} for x_1, \sqrt{3} for x_2, and so on. They proved that if you get a 0, then the polynomial has to be zero—it follows from essentially the degree argument that given a polynomial of a certain degree, if you plug in an irrational algebraic number of sufficiently high degree,2 then it won’t result in a 0. For example, let us take \sqrt{2}. A polynomial of degree 1, in one variable, can never be 0 on \sqrt{2}, while a polynomial of degree 2 may be 0. On the other hand, if you have the irrational number \sqrt{2}+\sqrt{3}, then no polynomial of degree 2 will be 0 on this number. Actually, you need a polynomial of degree 4 if you want 0. So, that is it, essentially—if you have been given an arithmetic circuit, you can compute the degree, because you know how many multiplications there are. Once you know the degree, you pick out an irrational number of appropriately high enough degree, then it can never be 0 on this polynomial unless the polynomial itself is 0.

All of this is nice to know, but then, when you actually want to implement this test, you cannot work with irrational numbers. You have to approximate them with rational numbers. Whenever you approximate, you have to choose a certain precision of approximation: 10 decimal digits, 20 decimal digits, and so on. What they showed was that the higher the precision of the approximation, the lower the probability of error. Let me explain this roughly, just to make the point, even if it is not completely correct. Let’s say you choose a precision and then you create a set of numbers, let us say 10 numbers, such as 1.110, 1.111, 1.112, 1.113, and so on, up to 1.119. You have 10 numbers with the same precision. So, pick one of these 10 numbers randomly and substitute it, and do it for every variable. So, here the randomness is that for every variable you have to pick one of the 10 numbers. So, it is a 4-bit randomness. Then, you get a certain probability of error: errors do come in because it is an approximation. What they showed was that, as you increase the precision, 2-digit, 3-digit, 4-digit, and so on, while keeping random bits the same, your probability of error goes down. The more precision you have, the lower the error. So, it is a very interesting test, and the trade-off is interesting. The usual trade-off is the amount of randomization versus error. Here, the trade-off is the amount of precision versus error. Randomization remains the same, fixed.

So, this is the approach we thought we would use for primality testing, but it did not work. We then discovered a very different approach, which eventually gave the same trade-off. That amount of precision was captured by the following. First, we translated the polynomial testing problem to polynomial identity testing. How do we do that? Well, a number n is prime, if and only if, (1+x)^n \cong 1+x^n(\mod{n}). That is a very standard identity. Verifying if (1+x)^n \cong 1+x^n (\mod{n}) is a polynomial identity. So, this is the identity you need to verify.

But, if you go about verifying this identity, it takes forever, because you have to open up latex^n[/latex] and it will have latex[/latex] terms. So, the time you take will be something like the order of n, which is very large. What you want for primality testing is that your test needs to be a polynomial in \log (n). Verifying this identity is too expensive. What you do instead is, you pick a random small-degree polynomial in x and go modulo that polynomial also to verify the identity. When you go modulo a low-degree polynomial, then you can open up; keep reducing the degree, it is very efficient, and you can verify. But, then there are errors, because it will work for most choices of random polynomials, but not for all. So, what we showed was that you can choose these moduli polynomials in a way that the set of polynomials from which you pick one at random has a fixed size, essentially. But if you increase the degree of these polynomials in the set, the error goes down. So, in that sense of precision, if you increase the degree of the polynomial you choose, your error will go down. We also showed that random bits will remain fixed in the process. I had this intuition that we can shrink the set of polynomials we were working with to a very tiny set, that is of size polynomial in \log (n). Then, we can try out each one, one by one. That is what we eventually proved.

I had this intuition that we can shrink the set of polynomials to a very tiny set

When did you start becoming interested in mathematics and computer science?

MA: Computer science, of course, did not even exist when I was studying in school. I always enjoyed doing mathematics, because that is the only exact subject I found; everything else, all other subjects, were inexact, including physics. So, they never interested me; in maths, everything is black and white, that is what I really liked and enjoyed doing. I ended up doing computer science simply because I had a high JEE rank, so everybody said you should take computer science, and so I took it. See, initially, I was very fascinated to have come to Kanpur, which had a very good mainframe DEC-1090 at the time. I was very fascinated with programming, a very normal thing, but also exact, so it really appealed to me. Initially, I did a lot of programming, at some point got bored of it, and then got interested in theoretical computer science, which is essentially maths, understanding computing, algorithms, and so on, and then continued with that.

We would like to know a bit about your upbringing and family, and how it influenced your trajectory.

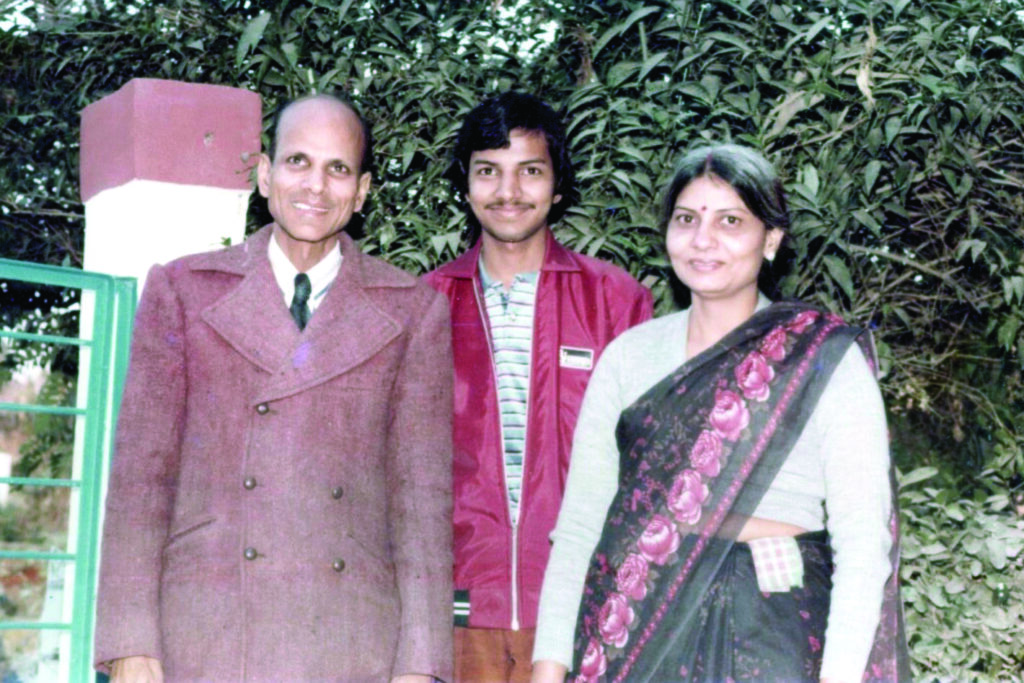

MA: My grandparents from my father’s side were gone before I was born. So, when I grew up, I had my mother, my father, and my elder brother—just the four of us. Both my parents were teachers—my father was a teacher in mathematics, while my mother was in education. There was, in that sense, an academic environment, not a research kind, as they were teachers in degree colleges. So we generally did tend to discuss more academic things than otherwise. I would say that I was good at studies from the beginning, and I realized that I could do well without studying. My modus operandi was that I would start studying a few days before the exam. I did not have a special interest in anything, except for reading. I would read a lot of magazines and novels. I would say that this part came easily to me, so it was natural for me to follow the academic direction. I would not say I was influenced by anybody; it is just that it seemed natural to me, because it was clear that this is what I was good at.

Any particular books that you liked at that time?

MA: Nothing in particular, I didn’t read any deep philosophy, or any fancy book. It was just simple, little books. Comic books like Champak, Enid Blyton, etc. Mainly light reading.

Once you realized that you were interested in academics, did you talk to your father or teachers about it?

MA: No, I was exceptionally introverted. I do not think I ever talked to my father about mathematics.

Did it make it easier or harder?

MA: I don’t think it made it harder; I was just happy doing [my mathematics]. There was only one problem I could not solve at that time, and it was because it couldn’t be solved, which is to integrate e^{-x^2}.3 I spent a lot of time trying to integrate it.

Did you ask someone about it later?

MA: No, I did not ask anybody, but when I came to IIT, I found it in the first year itself, in a book.

You did your schooling and intermediate in Allahabad. Any impressions from that time?

MA: Yes, I studied at the Government Inter College, Allahabad, for my 11th and 12th. Like I said, studies came naturally to me, but I had no idea of how good or bad I was. Absolutely no idea. I could only calibrate myself with my schoolmates. So, there, I was good, yeah, sure, but overall, I had no idea of how good or bad I was. In fact, it was my brother who suggested that I write JEE. At that time, there were not many JEE exam centres in Allahabad, just one big centre. So, when I went to give the JEE exam, some of my friends, who were plugged into the JEE atmosphere, were pointing out: “That guy is from St. Joseph’s, he got top 100’’, and after the exam, “oh this guy is getting 90 in maths, etc.,’’ Lots of things like that. I thought, “I am gone, I don’t think I am going to get more than 60 in maths.’’ I now think somebody graded me wrongly or something like that. [laughs]

So, you didn’t undergo any special training for JEE.

MA: I did. There used to be this Agarwal Classes, who sent lessons by post. It was great fun for me. The problems in other books were exceptionally boring. But Agarwal Classes would come up with very interesting, creative problems. I really enjoyed solving those problems.

Coming to your time at IIT as a student, can you say something about your friends from back then?

MA: Yeah, I made great friends here. I was used to the four of us staying in a house. Here you come to hostels, and for the first time in your life, you are free, with no parental oversight. And students from all over the country, all in the same group. So, I made great friendships that have lasted till today. The BTech years were spent essentially in hanging out with friends and doing certain things with them. Some good, some bad, some ugly things. So, almost no studies. I went to classes regularly in the first year, but after that, I stopped doing that as well.

So, it looks like, from being an introvert, you come here …

MA: Yeah, I was still an introvert. These days I can speak, as I am doing now. I can articulate my thoughts and speak for an extended period of time. I was not at all like this at that time. This is an acquired ability for me, not natural at all. I still get tongue-tied if it is anything out of my domain of expertise. Sometimes, I am asked to give a speech on, say, the status of pollution in Kanpur city. I do not know what to say, except for a few sentences.

Outside your comfort zone.

MA: Yes, outside my comfort zone… But, there were also a lot of extroverted kids. So, it was a great group, you know, there were some introverts, some extroverts, all having fun together. I used to, you know, solve assignments for my friends. So, I was popular from that perspective, though I was an introvert.

Any new skills that you acquired here during those days?

MA: Well, I do not think any of the new things that I acquired were good skills. They were all bad skills [laughs]. Using colourful language, drinking alcohol….

But you enjoyed all of this.

MA: Completely.

You said you made a lot of friends, many of them must be working in very different fields. Can you say a few words about the diversity of professions among your friends?

MA: Yeah, absolutely. There are a few IAS officers, a few IPS officers, one or two forest officers, industrialists, and some in the corporate sector. Those who have started their company, who have been in a particular company, and grown with that. A lot of them migrated to the US and did their start-ups, some went into the hardcore tech domain, Silicon Valley, so a whole variety of [professions]. A bunch went into academics as well.

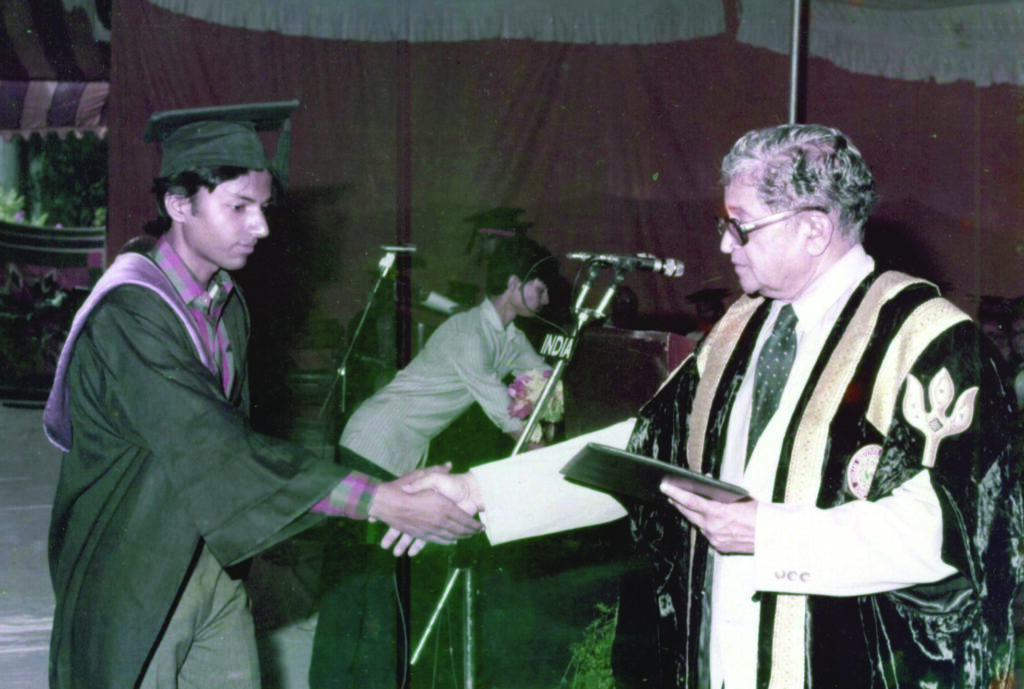

You said you chose CS because you could get into CS. What motivated you to study it further?

MA: Like I mentioned, I started with programming, and then I really enjoyed theoretical computer science. In the summer of the third year, I went to Pune for an internship with a company. I wrote a very long piece of software for them. I enjoyed writing it. I single-handedly wrote a massive amount of code and made it work. Once I came back, I asked myself, “Do I want to do this for the rest of my life?’’ The answer was clearly a no. Once that got ruled out, since I had no interest in either administrative services or an MBA, the only option left for me was to study further. Theoretical computer science was the thing that I had some knowledge about, and I enjoyed doing. So, I decided to do a PhD in this.

What was the company in Pune, and what kind of software did you write for them?

MA: There is a company called Kale Consultants, not sure if it still exists. The software was basically creating a database using B-trees and providing all the relevant functionality.

Did you consider other places as options for your PhD, apart from IIT Kanpur? How did you choose your supervisor?

MA: Yeah, I did consider. A lot of my friends and classmates were studying for the GRE to go abroad. So, I also decided one day to take a look at it myself, and looked at some of the books. And one thing I was told was you can sort of crack other things, but English is a big challenge. In the GRE, there is a verbal reasoning section where they ask about very difficult words. So, you have to really mug up the English dictionary. I started doing it. Five minutes later, I said I was not going to do this. I don’t care if I go outside or not, but I am not going to waste my time learning these words. Then I said: Okay, Kanpur is a place I like. Somenath Biswas was a theoretical computer scientist [I liked]. So, I went and told him I want to do a PhD with you, and he said, “That is fine.’’

So, it seems like clarity emerged from knowing what not to do…

MA: Yeah, it was a process of elimination.

What was your PhD thesis about?

MA: Complexity theory. Basically, studying the properties of NP-complete sets in particular. And we came up with some new properties.

After your PhD you went to SPIC Science Foundation, today’s CMI, for a postdoc. How was your experience there?

MA: Yeah, it was good fun. A whole bunch of us were together then. Madhavan [Mukund], Milind Sohoni, Meena Mahajan …. They all did their bachelors at IIT Bombay, and we are all 1986 grads. While Madhavan and Milind joined SPIC Science Foundation, Meena joined Matscience (The Institute of Mathematical Sciences). Then, after a year or two, Milind went to IIT Bombay. After about three years there, I went to Ulm in Germany for a postdoc. I spent a year there. And from there, I applied to IIT Kanpur.

What kind of problems did you work on in Germany?

MA: I think it was still complexity theory. In that decade, the 90s, the central theme of my work was the isomorphism conjecture. Usually, given two sets, you say there is an isomorphism between them if you can establish a one-to-one onto map between them. But in complexity theory, in addition, the condition that is imposed is that this map must be polynomial-time computable in both directions. That is known as a p-isomorphism, or a polynomial-time isomorphism. And you ask the question about which sets are isomorphic to each other. Of particular interest is this class of NP-complete sets, i.e., in terms of polynomial-time reduction to each other. It was shown in the 70s itself that a large number of naturally occurring NP-complete sets are, in fact, isomorphic to each other. So, there was a very famous conjecture saying that all NP-complete sets are isomorphic to each other. Initially, it was believed to be true, but then some results came that implied it is not true. It was at that stage when I started working on it. What I did was I looked at a stronger notion of NP-completeness. A set is said to be NP-complete if everything in NP reduces to that set and the reduction is a polynomial-time reduction. The stronger notion I looked at is when you also ask that the reduction is a first-order reduction. A reduction is said to be a first-order reduction if it can be computed by a constant-depth circuit, meaning the following. Any reduction can be written or expressed as a circuit, i.e., ANDs, ORs, and NOTs. You take an input, you operate on it, and you get an output. You now want it to have a constant depth, meaning the number of layers is constant and therefore of polynomial size. So, such reductions are called first-order reductions or \mathrm{AC}^0 reductions. This is certainly a subclass of polynomial-time reductions. A polynomial time reduction can be viewed as arbitrary polynomial-sized circuits, with no restriction on depth. So constant depth is much, much more restricted. So, this was a question that had been posed earlier also. What I could show eventually, by the end of that decade, was that all NP-complete sets under such reductions are isomorphic to each other under the same type of reductions. That is, the isomorphisms are also computed by \mathrm{AC}^0 circuits or constant-depth circuits, both ways. So, this proved the isomorphism conjecture in that setting of constant-depth circuits. That was a very interesting result, I thought, and it took a long time to prove.

Can you give a few examples?

MA: The set of all graphs (up to isomorphism) is not NP-complete, but the set of all 3-colourable graphs is, the set of all graphs with a Hamiltonian cycle—which means a cycle which covers all the vertices—that set of graphs is NP-complete, the set of all satisfiable Boolean formulas is NP-complete. There is a whole bunch of problems that are NP-complete, and they come from different domains. The interesting thing is that they are all isomorphic to each other.

What motivated your decision to come back to IIT Kanpur?

MA: I like this place a lot, having done my BTech and PhD here.

So, around the same time, you also received two awards, the Young Engineer Award and the Young Scientist Award. Was it for your work on the isomorphism conjecture?

MA: No, no. The Young Scientist Award was awarded probably for all the work I had done until then, by the Uttar Pradesh Council for Science and Technology in 2000. The Young Engineer Award was actually for something different. In 1998, I worked for the Indian Navy and designed an encryption system for them, which they implemented and deployed. So, I got the Young Engineer Award for that work.

the only emotion I had after solving it was that of relief

Was it a theoretical work?

MA: It was not just theoretical. There was some theory involved—theory was used to design a new encryption algorithm—but then I also coded it. I have always been good at coding, though I never wanted to do it full time, but I enjoy coding, and I think I am pretty good at coding. So, I did all the coding and handed over the code to the Navy.

We will come back to what is perhaps the high point of your career, the AKS primality testing algorithm. So, you wrote that paper with two of your students, as you mentioned. We believe that the paper was solicited by the Annals of Mathematics. How did it feel to achieve this, especially with two undergraduate students?

MA: Frankly, the only emotion I had after solving it was that of relief: “Yeah, I am done with it. I have been working on it for four years. Now, I can finally get rid of it.’’ I had no idea at the time—of course, I knew it was an interesting result—that it would blow up like this, the way it did. I wrote down the paper and sent it to a few people who had been working on this problem. I just sent it to them, stating this may be of interest to you, and the next thing I know, I am getting calls from the New York Times, here, there, and everywhere, and there was a whole media circus around it.

This is interesting. Wasn’t a deterministic polynomial-time algorithm a very sought-after problem?

MA: Yeah, it was, definitely. I knew that it was, and that many people had tried before and failed. So this was a significant result. But there are a lot of significant results that have been proved that do not get into the media, so not everybody talks about it. We uploaded that paper to our server, and within a few hours, the server crashed because there were so many download requests from all over the world. So that was bizarre. I thought it would be of interest to maybe a hundred people at the most.

You have mentioned in one of your talks that this deterministic polynomial time algorithm is more of a mathematical interest and not really practical because of its speed…

MA: Yeah, because although it is polynomial time, it is still a lot slower than certain randomized algorithms that are used for primality testing, where you can make the probability of error as small as 1 in 10^{100}, and it still remains pretty fast. From a practical perspective, you can live with an error of 1 in 10^{100}, because when you run such a code on a computer, the probability that the computer itself malfunctions is more than 1 in 10^{100}. So, why would you bother about such an error?

Was that perhaps why you didn’t think it would blow up as much as it did?

MA: Yeah, right, in the practical domain, it does not have much [of a significance]. So, it is only the theoreticians who may be interested.

This resulted in a year-long stay at the IAS in Princeton. How was your experience there?

MA: I would say it was an enjoyable stay. There was a lot of time to do things, and the best thing at the IAS is the forest. I would get into the forest every morning and come back by lunchtime to have lunch. So, it was very good. The only other person I worked with over there, or talked to, was Avi Wigderson. We discussed a few problems that did not eventually lead anywhere, but we exchanged some ideas. I, of course, met several mathematicians there: Pierre Deligne, Andrew Wiles, Edward Witten, and others. But they were doing things which do not intersect with me, so we would just exchange pleasantries.

within a few hours, the server crashed because there were so many download requests from all over the world

Did you also know then that Harish-Chandra was a permanent faculty member there?

MA: No, I didn’t know earlier. I came to know of this only when I was in Princeton. See, I am not a historian kind of person. I don’t take an interest in the history of any subject. In fact, even for research, my approach is, I would say, slightly unorthodox. If I have to do research on something, I don’t start by reading everything about it. I just start working on it. If I get stuck, I go somewhere and look it up, and try to read what is known about it. So, it sort of moves in that sense. Generally, many people first spend a long time reading everything on a topic and then start working on it. It has its pros and cons. My approach has worked all right for me so far. So, the point I was trying to make is that I don’t know much of maths either. The amount of maths I know is only slightly more than what I have used.

You have received several accolades, especially for this work, but not only for this. Some of them must hold a special place. Any thoughts?

MA: Awards make you feel good for some time. Apart from that, it does not have any other value for me. If there is money coming in, then money has a monetary value. It makes life slightly easier. But otherwise, I do not think I have been impacted by any award. I do not feel particularly happy about any award. Generally, I have a life philosophy of looking to the future. What is past is gone. So, awards are always for the past. In that sense, their value for me is not very high.

The amount of maths I know is only slightly more than what I have used

You were also at the National University of Singapore (NUS) for a year. How did this come about?

MA: Oh, it came about very simply. I had two years of leave. Thyagu [P.S. Thiagarajan] was there in the US when I was at Princeton. So, my first year in Princeton was funded by the Clay Mathematics Institute, and they were giving me a good amount of money. For the second year, Avi said, “No, no, you should stay, we will fund you’’. I said, “Okay. How much money are you going to give me?’’ It was like half of what the Clay Foundation was giving me. I said, “Look, I cannot survive. I have two young daughters. I am paying through my nose for their education in the US.’’ So, I said, “No, it won’t work. Let me just move on.’’ I happened to talk to Thyagu. He said, “Why don’t you come to NUS? We will pay you more than what the Clay Foundation pays”. I thought “Okay, sure. I will get a chance to work with Thyagu.’’ I really enjoyed working there. We actually wrote several papers, on a completely different domain, on something that he was working on: Hybrid Automata.

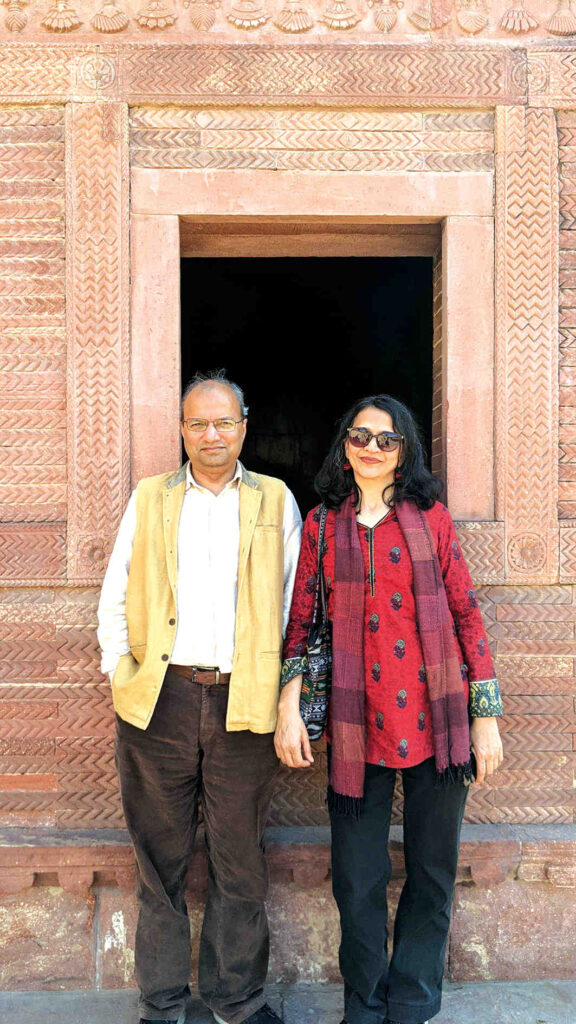

This is a good time to segue into your family. When did you get married? Did your wife accompany you on all your stints outside of Kanpur? What does she currently do?

MA: Rachna and I got married in 1991, immediately after I submitted my PhD thesis. She has been with me for all extended stints outside Kanpur since then. She started as a software engineer and worked in Chennai and Singapore when we were there. Then she worked for many years in automation projects at IIT Kanpur. Recently, she has switched to managing projects with a faculty member at the institute.

You said you have two daughters. What are they currently doing? Are they in academics?

MA: Yes, I have two daughters, and neither of them is in academics. They are not interested. The elder one did her architecture degree from the [School of Planning and Architecture], Delhi, and then she did a Masters in Design and Technology from Parsons School of Design, New York. Currently, she is working. The younger one did her graduation in mathematics from IISER, Mohali. Then, she did a Masters in Computer Science from the University of Chicago, and currently she is working in Chicago.

So, both Neeraj and Nitin went on to do their PhD with you, and now Nitin is also your colleague here. In your experience, do you enjoy working with collaborators more or working alone?

MA: I think if the collaborator is good, I enjoy working with them. In that sense, I have enjoyed working with Nitin. He is a good collaborator. But, I have not had much time to work with him in the past many years. But, otherwise, I work mostly alone, and I enjoy that as well. My thumb rule is that—I have more than a few thumb rules—if it is my PhD students, I let them be. If you pick up a problem and you have an issue, come and discuss it with me. Otherwise, you work on it. Do not bother me. So, fortunately, I have got very good independent PhD students who have pretty much written their own theses. I do not even have joint papers with most of them.

Except with Neeraj and Nitin, of course…

MA: But even with them, after their PhD thesis, I do not have any work with them. They did their own work. There are some problems, which are thought of as very hard to solve, that I am interested in. I realize that many people are not interested in working on these problems because they think it is a waste of time. I don’t mind wasting my time. I just enjoy thinking about the problem. That is what really makes me happy. Therefore, I end up working on it.

Can you give an example of such a problem?

MA: So, there is this polynomial identity testing problem. Like we have mentioned, there is a randomized polynomial time algorithm—several of them, in fact. But, can we de-randomize it? That is, can we have a deterministic polynomial-time algorithm for identity testing? Is it possible? It is a very fundamental problem. It is known that if you find a deterministic algorithm for it, then there are very strong consequences. There are certain lower bounds that you can get, which are not easy to get otherwise. It is a problem people have been working on for quite a while. It’s known to be a very hard problem, and we have not been able to solve it yet.

You have had several PhD students, and now they are all well-placed in various premier institutions in India. It seems to reflect your style of mentoring and training future scientists.

MA: No, it reflects the fact that these students were very good, because I had no role in their training. Like I said, I was just like “Do your work, don’t trouble me.’’ So, everything they have achieved is thanks to their own capabilities.

I just enjoy thinking about the problem. That is what really makes me happy

More recently, during the COVID pandemic, you and your team came up with a model to predict the spread of the pandemic. Can you tell us how this model came about? How did it all pan out in retrospect? And, also importantly, how did you face the bouquets and brickbats from an anxious community?

MA: It is a very interesting story. It is a bizarre story. In May 2020, DST [the Department of Science & Technology, India] constituted a committee to solicit COVID models from Indian groups and identify one that is most suitable for predicting the COVID trajectory in India and recommend it to the government. [Mathukumalli] Vidyasagar was the chairman of that committee, and I was put in as a member of that committee. There were 8 other members, 10 members in total. So, in May or June, we put out this call, and we got about 35 submissions. Half of the members of our committee were biologists. They did not have expertise with models. Eventually, it was a few of us who were statisticians and mathematicians who really looked at and analyzed the models. And, we shortlisted two. They had two very different philosophies. One was a mean-field model, that is, it looks at the entire population and sets up equations. There are some parameters governing those equations, so you estimate the parameter values and predict the trajectory. The second was an agent-based model. Every individual is modelled as an independent agent. Interactions between them are modelled, and then you scale them. While asking for submission, we had also asked everyone to do a simulation for one city and show the results. So, these two models were identified, and we told DST that these two are our recommendations. DST gave a positive reply, asked us to cross-check and make a proper recommendation. So, we got back in touch with these two groups and said, “Okay, you got one city, but we would like to see how your model works at the national level. So, can you do the simulation?’’ The agent-based group said that a simulation for an all-India level is impossible because that would mean one billion agents. The compute time for that simulation would be more than the real-time trajectory. By the time the simulation was done, COVID would have come and gone. They said they could at best do a city. So, this model was not suitable for what the government needed: a national-level model. The other candidate, the mean-field model, did a simulation for India. But it turned out that their prediction went horribly wrong. We realized this by early July. Therefore, we had none. But we had told DST that we had a model that we were going to propose. Vidyasagar and I felt that this was deeply embarrassing. Firstly, the entire Indian scientific community couldn’t put up a single model. And, secondly, we had said that we had got a model. But now, we would have to concede that we didn’t. We decided that we had to make an attempt ourselves and come up with a model. As suggested by Vidyasagar, we approached Lieutenant General Madhuri Kanitkar, who was a member of the committee. She was a doctor in the army. In fact, she was in the Chief of Defence Staff Office, leading the entire medical corps of all the services. So, she had a very good clinical understanding. So, the three of us worked on this and we developed a model.

What we found while developing the model was that the reason earlier models failed at a pan-India level was that every model has certain parameters. Now, the key thing needed for any model to capture the trajectory reasonably well is that the value of those parameters needs to be correctly estimated. If you get the parameter values wrong, then your prediction will go wrong. And in the previous model, the way they found the parameter value was by following a method that was being done typically 100 years ago when the SIR model was invented. They would take the very initial days of the pandemic, when the number of infected was very tiny. So, almost everyone is uninfected. This allowed for certain approximations of the equations, and at that point, you could estimate the value of the parameters, in the very early days. So, once you have that and you assume that the parameter values for a particular pandemic don’t change, then you’ve got it. Now, where this approach went wrong was that the parameter values clearly changed because of the lockdowns. One of the parameters captures how fast it is spreading. When you put a lockdown, of course, that parameter value changes. But then, once you go past the early days, those initial approximations won’t work. So, you cannot get a handle on the change parameter. So, you make a guess, and if you guess wrong, then you are wrong. Therefore, we decided that our model has to be of a kind where you can learn the parameter values from the data. What is the current parameter value? The current data should tell you. There should not be any guesswork. So, that was the required condition or constraint under which we worked. Fortunately, we got a model where we could learn the parameter values from the reported data.

This was fine, but we asked ourselves, “Does it predict correctly?’’ This was August. Our model predicted that the infection peak would be in September. We waited until September, and it actually did peak in September. Then we felt confident that this looked like the correct model. We then recommended our model to DST and made it public. We, of course, further refined it later.

It was a very interesting experience working on this. By the way, implementing this model involves taking the latest data, which is required to recalculate the value of the parameter. This requires a big computer code that I wrote myself. But then, I should say the modelling community had a very strange reaction to this. They said if your parameter values keep changing, then that is not a model. But the parameter values are actually changing. But the community seemed to be stuck with a method from a hundred years ago: the parameter value is fixed, and if you can’t get it, then you make a guess. We wanted to learn from the data and not make a guess. So a lot of criticism also came out against us, which became like a big cacophony when we got the second wave wrong.

In March 2021, the second wave was starting, and because of the new Delta variant, the parameter values changed again. Now, parameter values don’t change overnight. There is a period during which it changes. So April was that period. I was running the model every day, and I was learning from the data. I would get a different value of the parameter every day. It was changing. Now, the predictions from that learned value of continuously changing parameters were also changing continuously. It was an embarrassing situation for us. Every day, we were changing our predicted dates and values for the peak. Keep in mind that at that time, we still didn’t fully understand the model. So, at some point, I realized that we had to wait until the value of the parameter stabilizes. Fortunately, the model had a way of checking if the parameter value had stabilized. So I just waited. And by around the 25th of April, I realized that the last five days’ parameter values were stable. Then the model said that it would peak around the 8th of May. And that is actually when the peak did happen.

But in the interim, during a 2–3-week period in April/May, things went haywire and it led to huge criticism, “These guys don’t have a clue what they are talking about. Look! Every day their prediction is changing.’’ There were many academicians who criticized this. And it was puzzling for me. Yes, we were wrong initially. And I actually openly admitted to it. But it is because things were changing. We had no way to predict what the final stable value of the parameter would be. If it is changing, the model is useless. We can only predict when it stabilizes. So, yes, it led to quite a bit of a public show of unhappiness. But it is what it is.

It is good to know the circumstances under which you got into this.

MA: I think the community needs to grow up a bit. You have to have an open mind about new ideas. You can’t stay stuck in the old ways just because earlier pandemics never had this kind of control applied. This was the first pandemic where serious controls were applied over an extended period of time. So none of your older ideas or models worked. In fact, there was a study on a bunch of models in the US. From predicting that the COVID pandemic will peak in the future at a very high level, causing utter chaos, to another model saying it has already peaked and is going to crash now, a whole range of predictions were there because everyone was just kind of guessing the parameter values. And from whatever I saw, while our model was certainly not perfect, it was the most accurate of all the models that were available at that time. In fact, I applied that model to a whole bunch of countries, including the US. We could predict US peaks well in time as well.

Can your model be used for all the variants?

MA: Yes. Every variant changes some parameter. So you can predict once you learn the new parameter. When Omicron came, it kind of blew up. The key thing about Omicron was that it was three times more infectious than Delta. Our model could compute these things. Delta was two times more infectious than the original variant that came to India. Omicron was three times more infectious than Delta and spread very rapidly. That is what we saw. Fast rise and fast fall.

So would you say that the novelty of your model is in allowing for parameters to change?

MA: Yes. What is unique about our model is that the current value of parameters can be learned from the daily new infection data, provided the parameter values are stable. So, I need about, let us say, two weeks of data, to properly learn the current value of parameters. But in those two weeks, the parameter values should not be changing. That is all. It was a very good experience in a very different domain that I had never worked on before. There were some very interesting incidents, too. Like when Omicron was shooting up massively in January 2022, I was doing simulations for various cities. So, around the 10th or 11th of January, I got a call from the health minister of Maharashtra saying, “It is spreading very rapidly, should I put a lockdown?’’ I said, “By the time you put a lockdown and it becomes effective, this would have peaked.’’ Our model was predicting the 14th of January as the date of the peak. So I asked him not to bother, to which he agreed. He didn’t put a lockdown. I was a little apprehensive. But fortunately, on the 14th of January, it indeed peaked and came down after that. That was good.

Are you now interested in modelling?

MA: I am interested, sure. I enjoyed it while I was doing it. I do not do it anymore. In fact, I do not do any research anymore, I think, sitting in this chair.

What is your day-to-day routine like as the director, and how are you able to manage your administrative responsibilities with your academic pursuits?

MA: I manage it by doing no research and no academic pursuits, because there is no time. There are always 20 things to do, of which I can do about 10. The rest, I just cannot do.

Do you miss your academic pursuits?

MA: Yeah, I miss two things. One is time for thinking, and the other is teaching. I really enjoy teaching. I especially enjoy teaching core courses like discrete math, algorithms, and other similar courses.

Are there things that you feel satisfied about, sitting in the chair, having done something for the institute?

MA: Hopefully, I will be satisfied four years from now. Today, I completed one year. So, four more years to go. I don’t think that I have achieved anything substantial in the past year. So, I can’t claim to be satisfied as yet. But we will see how it goes in the future.

Congratulations on completing one full year! Can we ask about the kind of changes you are trying to bring?

MA: One of the key things I am trying to do, which is somewhat paradoxical, given my own research, is to orient the institute towards not only doing research, but also producing useful technologies which impact life outside, for the common citizen. Paradoxically, my own research is as far away from impacting any life as you can imagine. But there is another part of me that has been feeling very strongly about the lack of impactful work, impactful technologies, created by Indian academia, which is very unusual. You know, across the world, when you see, US, Europe, China, Russia, or anywhere else, the top academic institutions are the ones that do very good research, and they also produce very impactful technologies. India is probably a unique large country, where our top academic institutions only do research, only publish papers—high-quality papers, sure—but if you ask what impactful technologies have come out of India, since independence, you will only have a handful. So that is a lacuna in our systems, which needs to be addressed, and I have been a big proponent of that. Since I come from a domain that has nothing to offer in this space, I took up the challenge five years ago and set up a major initiative in cybersecurity at IIT Kanpur. That is now flourishing, and a lot of interesting technologies have come out of it. So I have that experience, and I also really enjoyed that part. That is one of my major focus areas as the director, to help and maybe convince our faculty to think about this aspect as well.

But since you are a theoretician, you have to convince people who are not from your community. How do you go about something like that?

MA: Well, it’s not easy. What is also true is that—and many people are realizing it—today the government is willing to give very large sums of money to academia if we promise hard deliverables. Therefore, it becomes easier to convince people. Of course, many may still not be interested, but that is fine; the key thing is that many should be interested. And, even if 20 percent of the faculty are interested, that’s good enough.

So is it about changing mindsets?

MA: Changing mindsets, yes, but it is also about facilitating, because it’s not easy. You can create a technology in the lab, but to make it deployable, you have to pass through a lot of challenges, including interacting with users to understand whether they will use this at all or if they want to use it, in what way would they want to use it, in what form and such. So a lot of back and forth has to be done, which faculty in academia are not trained for. So there is a need to handhold them in that journey. That’s something that I’m setting up here, an office which will help the faculty in this aspect.

About training, many scientists usually find administrative roles to be very challenging. It comes in the way of their research, and more often than not, they do not get the training required to do administrative jobs. What can be done about this, especially for scientists who will go into leadership positions?

MA: You know, administration, from what I’ve seen, is mostly common sense. You need experience, definitely. The way I acquired training for being an administrator is that I became the head of the department, then I became the dean, and then I became the deputy director. So I had several positions of responsibility. And that, I think, is more than sufficient. You have to have a commitment. You should be able to give time, you should have passion, and you should apply common sense. No other, you know, fancy trainings at IIMs, or such places, are needed for this.

administration, from what I’ve seen, is mostly common sense

How do you see the future of computer science evolving in the Indian context?

MA: Well, these days, you know everything is getting swamped by AI. You don’t hear anything except AI. In computer science, particularly, everyone seems to be doing AI. So this is both good and bad, in the sense that it is a new discipline. Over a period of time, my sense is that this will fork out of computer science just as computer science forked out of electrical [engineering]. In the same way, AI will fork out of computer science, and it will acquire its own identity. Once that happens, computer scientists can bring the focus back to computer science and its principles. These days, it’s only AI. The number of changes it is bringing, already, is staggering. The ability that AI tools have these days is just mind-blowing. They will dramatically change the way we work.

Do you think CS will face funding challenges as a result?

MA: Yeah, the non-AI part of CS is likely to face challenges. Firstly, right now, computer science is the most popular branch for students. I don’t think it will remain the same once AI forks out of it. AI will become the most popular branch, and the funding will also primarily go into AI.

From joining here as a student to now becoming the director of this institute, you have seen the institute from almost all possible positions. Can you tell us about your journey and how this has shaped you as a person, a teacher, and a scientist?

MA: Yeah, the institute has had a profound effect on me. One of the key characteristics of IIT Kanpur has been its openness. There are almost no hierarchies here, very flat. From the senior-most professors to the junior-most, they are all treated equally. Over time, of course, some hierarchy has started emerging. Earlier, when the system was smaller, people knew each other, so it was a lot easier to connect with each other. Now, the system is bigger, so it is slightly more hierarchical, but still, it is still very flat, especially when compared to other institutions in India. And that is something that really makes you comfortable, even if you are a young person in the system.

the institute has had a profound effect on me

And more so as a teacher, perhaps.

MA: Yes, but also as students. We have had student participation right from the beginning in all decision-making. Now, of course, many other institutions have adopted that, but it is still probably only in name. Here, it is practiced. A student president can walk into my office any day and argue with me about something that needs to be done. When I say argue, I don’t mean in the sense of sitting in a dharna, but sitting across the table with me and arguing about whether something is correct. I have had to concede at times, and we have changed things accordingly. Right from the beginning of the institute, this is the culture that got created, and it has endured.

You joined here in 1982. So, you have been here for almost 43 years, essentially. Out of the 65 years since the inception of IIT Kanpur, you have been here for two-thirds of its existence. How does it feel?

MA: Well, it is my home. I do not have any other home. My Allahabad home is gone.

What is your opinion on the special role that IIT Kanpur can play in the near future, considering all the impact that it has had so far?

MA: We are in a very unique position, being in the state of UP [Uttar Pradesh]. Given the size of the state, it’s about a sixth of the whole country in terms of population, it is way behind more advanced states like Gujarat, Tamil Nadu, Karnataka, etc. So, for the growth of the country, it’s very important and essential that UP also grows; otherwise, we will hold the country back. And today, the government of the state is very keen on growing the state. A lot of this growth will come from technology, IT, etc, and that’s where IIT Kanpur can contribute. We are the most pre-eminent technology institute in the state. We have the necessary expertise to provide a lot of technological input and support. If we do it well, it will help the state, and we will help ourselves as well.

Thank you so much for making the time for us. It was absolutely delightful.

Acknowledgement: We thank Shilpa Gondhali for her kind help with transcribing the recorded interview.\blacksquare

Footnotes

- Zhi-Zhong Chen and Ming-Yang Kao, “Reducing randomness via irrational numbers.’’ In Proceedings of the twenty-ninth annual ACM Symposium on Theory of Computing, 1997, pp. 200–209. ↩

- The degree of an algebraic number m is the minimal degree of a polynomial that has the number m as a root. For \sqrt{2} the degree is 2, as it is a root of x^2-2=0. ↩

- This function does not have an elementary antiderivative, even though it is integrable over the real numbers. ↩